Author: Traci St.Martin/Craig Stancl/Kevin Peterson/Scott Bauer/Sridhar Dwarkanath/Mike Turk

| Sign off | Date | Role | CBIIT or Stakeholder Organization | Reviewer's Comments (If disapproved indicate specific areas for improvement.) |
|---|---|---|---|---|
| - | - | - | - | - |

The purpose of this document is to collect, analyze, and define high-level needs for and designed features of the National Cancer Institute Center for Biomedical Informatics and Information Technology (NCI CBIIT) caCORE LexEVS Release 5.1. The focus is on the functionalities proposed by the stakeholders and target users to make a better product. The use case documents show in detail how the features meet these needs.

The scope of the LexEVS 5.1 release is to support the Metathesaurus Browser project by improving search and sorting performance and by changing the RRF loader so that loaded content more accurately reflects the source data. In addition, we will explore enhancements to the loader framework and provide a recommendation/implementation for value set/domain support.

Please view the LexEVS 5.1 Scope document.

Please view the LexEVS 5.1 GForge items.

Proposed technical solution to satisfy the following requirements:

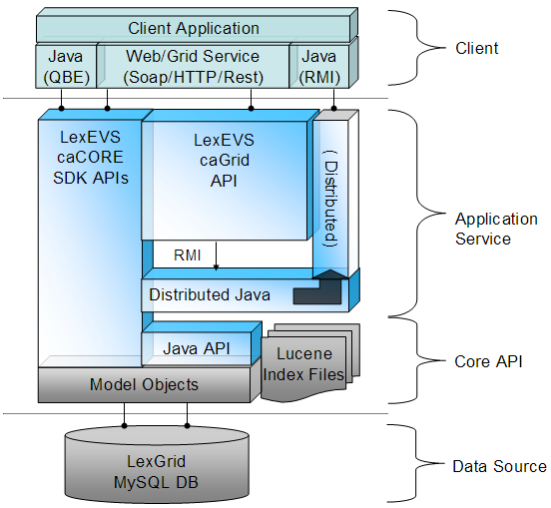

The LexEVS 5.1 infrastructure exhibits an n-tiered architecture with client interfaces, server components, domain objects, data sources, and back-end systems (Figure 1.1). This n-tiered system divides tasks or requests among different servers and data stores. This isolates the client from the details of where and how data is retrieved from different data stores.

The system also performs common tasks such as logging and provides a level of security for protected content. Clients (browsers, applications) receive information through designated application programming interfaces (APIs). Java applications communicate with back-end objects via domain objects packaged within the client.jar. Non-Java applications can communicate via SOAP (Simple Object Access Protocol) or REST (Representational State Transfer) services.

Most of the LexEVS API infrastructure is written in the Java programming language and leverages reusable, third-party components. The service infrastructure is composed of the following layers:

Application Service layer - accepts incoming requests from all public interfaces and translates them, as required, to Java calls in terms of the native LexEVS API. Non-SDK queries are invoked against the Distributed LexEVS API, which handles client authentication and acts as proxy to invoke the equivalent function against the LexEVS core Java API. The caGrid and SDK-generated services are optionally run in an application server separate from the Distributed LexEVS API.

The LexEVS caCORE SDK services work directly against the database, via Hibernate bindings, to resolve stored objects without intermediate translation of calls in terms of the LexEVS API. However, the LexEVS SDK services do still require access to metadata and security information stored by the Distributed and Core LexEVS API environment to resolve the specific database location for requested objects and to verify access to protected resources, respectively.

From the client perspective, the LexEVS services will function as "ports" accessible through the caGrid 1.3 service architectural model. LexEVS services will follow the caGrid architecture for analytical and data services. See the caGrid 1.3 documentation for architectural details: https://cabig.nci.nih.gov/workspaces/Architecture/caGrid/

Core API layer - underpins all LexEVS API requests. Search of pre-populated Lucene index files is used to evaluate query results before incurring cost of database access. Access to the LexGrid database is performed as required to populate returned objects using pooled connections.

Data Source layer - is responsible for storage and access to all data required to represent the objects returned through API invocation.

High Level Design Diagram

Lucene is very fast as a search engine. Given a text string, Lucene can find matching documents in huge indexes very fast. This is the purpose and strength of Lucene. Lucene is not, however, a database. Retrieving information from the documents that the search found as 'hits' is slow.

Consider this scenario: A user searches for 'heart' in the NCI MetaThesaurus. When Lucene does its search, it will return probably 50,000+ 'hits'. This search is done very fast. LexEVS previously would retrieve all of those documents to populate the ResolvedConceptReference. Retrieving this many documents from Lucene is slow.

The solution is to lazy load the documents as needed. After the Lucene search is complete, we store only the Document id. Then, when information from a document is needed, that document is retrieved by its id. This is helpful in Iterator-type scenarios, where retrieval can be done one document at a time.

As we move forward, it is important to keep current with the latest Lucene API. This matters not only for performance reasons: relying on deprecated methods will limit our ability to upgrade our Lucene dependencies.

We advertise our Searches as being 'extensions', but in reality it is very difficult (or impossible) for a user to create a plug-in type Search.

The Interface org.LexGrid.LexBIG.Extensions.Query.Search will be introduced. The purpose of this interface is to give users a plug-in type Interface to implement different search strategies. This interface will accept a text query string and output a Lucene Query.

As with Searching, Sort algorithms are not currently easy to extend. A well-defined and 'Extension-ready' interface would allow users to add additional sort functionality on demand, without rebuilding or recompiling.

The existing Interface org.LexGrid.LexBIG.Extensions.Query.Sort will be expanded to allow for easy implementation and flexibility, allowing rapid creation of new Sort Algorithms and techniques.

Join EntityDescription when building AssociatedConcepts

The 'EntityDescription' field of 'Entity' is currently retrieved with a separate SQL call. Retrieving it with a JOIN instead will allow AssociatedConcepts to be built with minimal calls to the database.

Furthermore, this will allow the 'EntityDescription' to be available without requiring the actual 'CodedEntry' to be resolved. For most use cases, this should enable users to resolve Graphs with 'CodedEntryDepth=0'. Avoiding any resolving of the CodedEntry will keep resolve times to a minimum.

Join EntryState when building CodedEntry

The EntryState is currently populated with a separate SQL SELECT query to the database. This results in one SELECT statement per CodedEntry returned, and a large number of CodedEntries may be resolved at once. Populating the EntryState with a JOIN instead will be more efficient because it avoids these additional SELECT queries.

Loads of the NCI MetaThesaurus RRF formatted data into the LexGrid model require a number of loader adjustments in order to accurately reflect the state of the data as it exists in the current RRF files. No model or API changes will be necessary to accommodate the data; changes will be made directly to the loader.

The LexEVS Value Domain and Pick List service will provide the ability to load Value Domain and Pick List Definitions into the LexGrid repository, to apply user restrictions, and to dynamically resolve the definitions at run time. Both the Value Domain and Pick List services are an integrated part of the LexEVS core API.

The LexEVS Value Domain and Pick List service will provide programmatic access to load Value Domain and Pick List Definitions using the domain objects that are available via the LexGrid logical model. The service will provide the ability to apply certain user restrictions (for example, pickListId or valueDomain URI) and dynamically resolve the Value Domain and Pick List definitions at run time.

The LexEVS Value Domain and Pick List Service is meant to expose the API specifically for the Value Domain and Pick List elements of the LexGrid Logical Model. For more information on the LexGrid model see http://informatics.mayo.edu/

LexEVS already provides a set of loaders within an existing legacy framework, which has served LexEVS developers well over the years. But as LexEVS has gained users and requests for new loaders have grown, it was decided that a new Loader Framework should be developed. The new framework provides classes and interfaces that are more modular and easier to extend; improves loader performance; allows dynamic loading of new loaders; and is built upon proven open source technologies such as Spring Batch and Hibernate. Finally, the new Loader Framework code is completely independent of the current loader code in LexEVS, so there will be no impact on current loaders.

LexEVS uses the BDA (Build and Deployment Automation) system to build and deploy artifacts. The build script that produces and deploys these artifacts is kicked off via a build server (an instance of AnthillPro).

Include a link to the Core Product Dependency Matrix.

Include any new dependencies in the Core Product Dependency Matrix and summarize them here.

List any assumptions.

Specify how the solution architecture will satisfy the requirements. This should include high level descriptions of program logic (for example in structured English), identifying container services to be used, and so on.

Background - Lucene Documents

Lucene stores information in Documents, and these Documents have Fields that are used to hold information. Each Document has a unique id.

For example, an index of People may be indexed in Lucene as:

    Document: id 1
        First Name: John
        Last Name: Doe
        Sex: Male
        Age: 45
    Document: id 2
        First Name: Jane
        Last Name: Doe
        Sex: Female
        Age: 40
    ... etc.

LexEVS stores information about Entities in this way. Property names and values, as well as Qualifiers, Language, and various other information about the Entity are held in Lucene indexes.

Background - Querying Lucene

Lucene provides a Query mechanism to search through the indexed documents. Given a search query, Lucene will provide the Document id and the score of the match (Lucene assigns every match a 'score', depending on the strength of the match given the query).

So, if the above index is queried for "First Name = Jane AND Last Name = Doe", the result will be the Document id of the match (2), and the score of the match (a float number, usually between 1 and 10).

Notice that none of the other information is returned, such as Sex or Age. It is useful for that extra information to be there, because if it exists in the Lucene indexes we do not have to make a database query for it. However, retrieving data from Lucene Documents is expensive, just as retrieving data from a database would be.

    Document: id 1
        Code: C12345
        Name: Heart
    Document: id 2
        Code: C67890
        Name: Foot
    Document: id 3
        Code: C98765
        Name: Heart Attack

If a user constructs a Query (Name = Heart*), the query will return the matching Document ids (1 and 3). Previously, LexEVS would immediately retrieve the 'Code' and 'Name' fields from the matches, and use them to construct the results that would ultimately be returned to the user. This does not scale well, especially for general queries in large ontologies. In a large ontology, a Query of (Name = Heart*) may match tens of thousands of Documents. Retrieving the information from all these Documents is a significant performance concern.

Instead of retrieving the information up front, LexEVS will simply store the Document id for later use. When this information is actually needed by the user (for example, the information needs to be displayed), it is retrieved on demand.
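
The on-demand retrieval described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the LexEVS implementation: `DocumentStore`, `LazyHit`, and `wrap` are hypothetical names, and the store is an in-memory stand-in for the Lucene index that simply counts how many documents were read.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

/** Sketch of lazy document loading. DocumentStore is a stand-in, not a Lucene class. */
class LazyHitsSketch {

    /** Hypothetical store that counts how many full documents were read. */
    static class DocumentStore {
        private final Map<Integer, String> namesById = new HashMap<>();
        int fetchCount = 0;

        void add(int docId, String name) {
            namesById.put(docId, name);
        }

        String fetchName(int docId) {
            fetchCount++; // stands for one expensive document read
            return namesById.get(docId);
        }
    }

    /** Keeps only the Document id after the search and loads the name on first use. */
    static class LazyHit {
        private final int docId;
        private final DocumentStore store;
        private String name;
        private boolean loaded = false;

        LazyHit(int docId, DocumentStore store) {
            this.docId = docId;
            this.store = store;
        }

        String getName() {
            if (!loaded) {
                name = store.fetchName(docId);
                loaded = true;
            }
            return name;
        }
    }

    /** Wraps the ids returned by a search without reading any document. */
    static List<LazyHit> wrap(List<Integer> matchingDocIds, DocumentStore store) {
        List<LazyHit> hits = new ArrayList<>();
        for (int docId : matchingDocIds) {
            hits.add(new LazyHit(docId, store));
        }
        return hits;
    }

    public static void main(String[] args) {
        DocumentStore store = new DocumentStore();
        store.add(1, "Heart");
        store.add(2, "Foot");
        store.add(3, "Heart Attack");

        // Ids 1 and 3 are the matches for (Name = Heart*) in the example index.
        List<LazyHit> hits = wrap(Arrays.asList(1, 3), store);
        System.out.println("Reads after search: " + store.fetchCount);
        System.out.println("First hit: " + hits.get(0).getName());
        System.out.println("Reads after first display: " + store.fetchCount);
    }
}
```

In this sketch a hit that is never displayed never triggers a document read, which is the property that matters when a query matches tens of thousands of Documents.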

To allow users to plug in custom search algorithms, the LexEVS Extension framework needed to be extended to include Searches.

The org.LexGrid.LexBIG.Extensions.Extendable.Search interface consists of one method to be implemented:

| Class: | org.LexGrid.LexBIG.Extensions.Extendable.Search |
|---|---|
| Method: | public org.apache.lucene.search.Query buildQuery(String searchText) |
| Description: | Given a String search string, build a Query object to match indexed Lucene Documents |

This enables the user to construct any type of Query given search text. Wildcards may be added, search terms may be grouped, etc.

AND vs. OR

Previously, for most search algorithms Lucene applied an 'OR' to the terms if multiple terms were input as search text. For example, a search of 'heart attack' would match all documents containing 'heart' OR all documents containing 'attack'. This led to non-intuitive results being returned to the user. Changing Lucene to default to an 'AND' type strategy will increase search precision and in most cases reduce the number of results returned for a given query, which will in turn increase overall performance.
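
The difference between the two strategies can be illustrated with a small sketch. It works on plain whitespace-separated tokens and does not use Lucene; the class and method names are hypothetical and chosen only for this illustration.

```java
import java.util.Arrays;
import java.util.HashSet;
import java.util.Locale;
import java.util.Set;

/** Sketch of OR versus AND term matching on whitespace-separated tokens. */
class TermOperatorSketch {

    /** Lower-cases the text and splits it into a set of tokens. */
    static Set<String> tokens(String text) {
        return new HashSet<>(Arrays.asList(text.toLowerCase(Locale.ROOT).trim().split("\\s+")));
    }

    /** OR strategy: the document matches if it contains at least one query term. */
    static boolean matchesAny(String document, String query) {
        Set<String> docTokens = tokens(document);
        for (String term : tokens(query)) {
            if (docTokens.contains(term)) {
                return true;
            }
        }
        return false;
    }

    /** AND strategy: the document matches only if it contains every query term. */
    static boolean matchesAll(String document, String query) {
        return tokens(document).containsAll(tokens(query));
    }

    public static void main(String[] args) {
        String query = "heart attack";
        for (String doc : new String[] {"Heart Attack", "Heart Failure", "Panic Attack"}) {
            System.out.println(doc + ": OR=" + matchesAny(doc, query)
                    + ", AND=" + matchesAll(doc, query));
        }
    }
}
```

With the OR strategy all three example documents match 'heart attack'; with the AND strategy only "Heart Attack" does, which is the smaller and more intuitive result set.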

Algorithms

More precise DoubleMetaphoneQuery

DoubleMetaphoneQueries enable the user to input incorrectly spelled search text, while still returning results. Because this is a 'fuzzy' search, it is important to structure the Query in a way that the most appropriate results are returned to the user first.

For example, the Metaphone computed value for "Breast" and "Prostrate" is the same. Given the search term "Breast", both "Breast" and "Prostrate" will match with exactly the same score. Technically, this is correct behavior, but to the end user this is not desirable. To overcome this, we have introduced a new query, WeightedDoubleMetaphoneQuery.

WeightedDoubleMetaphoneQuery

This algorithm does not automatically assume that the user has spelled the terms incorrectly. Searches are also based on the actual text that the user has input, along with the Metaphone value.

Again, if the user input "Breast", the query will still match "Breast" and "Prostrate", but "Breast" will have a higher match score, because the actual user text is considered. This will add a greater precision to this fuzzy-type query.

Algorithm:

    get: user text input
    total score = 0
    metaphone score = 0
    actual score = 0
    metaphone value = lucene.computeMetaphoneValue(user text input)
    metaphone score = lucene.scoreMetaphoneValue(metaphone value)
    actual score = lucene.score(user text input)
    total score = metaphone score + actual score
    halt

Case-insensitive substring

SubStringSearch - This algorithm is intended to find substrings within a large string. For example:

"with a heart attack"

Will match:

"The patient with a heart attack was seen today".

Also, a leading and trailing wildcard will be added, so

"th a heart atta"

Will also match:

"The patient with a heart attack was seen today".

Algorithm:

    get: user text input
    user text input = '*' + user text input + '*'
    score = lucene.score(user text input)
    halt
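
The effect of the leading and trailing wildcard can be approximated outside Lucene with a case-insensitive containment check. The sketch below is only an illustration of the matching behaviour on whole strings; it does not score matches, and `SubstringSearchSketch` is a hypothetical name.

```java
import java.util.Locale;

/** Sketch of case-insensitive substring matching, equivalent to '*' + text + '*'. */
class SubstringSearchSketch {

    /** Returns true when userText occurs anywhere in indexedText, ignoring case. */
    static boolean matches(String indexedText, String userText) {
        String haystack = indexedText.toLowerCase(Locale.ROOT);
        String needle = userText.toLowerCase(Locale.ROOT);
        return haystack.contains(needle);
    }

    public static void main(String[] args) {
        String text = "The patient with a heart attack was seen today";
        System.out.println(matches(text, "with a heart attack"));
        System.out.println(matches(text, "th a heart atta"));
        System.out.println(matches(text, "WITH A HEART"));
    }
}
```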

Sorting matched results is an important part of interacting with the LexEVS API. Allowing users to plug in customized Sort algorithms makes LexEVS more flexible for more groups of users. To implement a Sorting algorithm, a user must implement the org.LexGrid.LexBIG.Extensions.Extendable.Sort Interface.

| Class: | org.LexGrid.LexBIG.Extensions.Extendable.Sort |
|---|---|
| Method: | public <T> Comparator<T> getComparatorForSearchClass(Class<T> searchClass) throws LBParameterException |
| Method: | public boolean isSortValidForClass(Class<?> clazz); |

    {"Heart", "Heart Failure", "Heart Attack", "Arm", "Finger", ...}

As described earlier, all results are by default sorted by Lucene score, so if we limit the result set to the top 3, the result is:

    {"Heart", "Heart Failure", "Heart Attack"}

The restricted set can then be 'Post' sorted. Because the result set has been limited to a reasonable number of matches, sorting and retrieval time can be minimized.

Algorithm:

    get: Sort requested by user
    get: Context sort is being applied to
    if: sort is not valid for Context
        halt
    else:
        get: Class to be sorted on
        if: sort is not valid for Class
            halt
        get: Comparator for Sort - given (Class to be sorted on)
        sort results using Comparator for Sort
    halt
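
A plug-in Sort along these lines might look like the sketch below. It is self-contained and therefore declares local stand-ins: the nested `Sort` interface only mirrors the two method signatures listed above, `LBParameterException` is a placeholder for the LexEVS exception of the same name, and `AlphabeticalSort` and `postSort` are hypothetical names. The Context check from the algorithm is omitted.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Comparator;
import java.util.List;

/** Sketch of a pluggable Sort applied as a 'Post' sort to a limited result set. */
class SortExtensionSketch {

    /** Stand-in for the LexEVS exception of the same name. */
    static class LBParameterException extends Exception {
        LBParameterException(String message) {
            super(message);
        }
    }

    /** Mirrors the two methods listed for the Sort interface. */
    interface Sort {
        <T> Comparator<T> getComparatorForSearchClass(Class<T> searchClass)
                throws LBParameterException;

        boolean isSortValidForClass(Class<?> clazz);
    }

    /** Hypothetical sort: case-insensitive alphabetical ordering of String results. */
    static class AlphabeticalSort implements Sort {
        @Override
        public boolean isSortValidForClass(Class<?> clazz) {
            return String.class.equals(clazz);
        }

        @Override
        @SuppressWarnings("unchecked")
        public <T> Comparator<T> getComparatorForSearchClass(Class<T> searchClass)
                throws LBParameterException {
            if (!isSortValidForClass(searchClass)) {
                throw new LBParameterException("Sort is not valid for " + searchClass.getName());
            }
            // Safe because the validity check above guarantees T is String.
            return (Comparator<T>) String.CASE_INSENSITIVE_ORDER;
        }
    }

    /** Sorts a copy of the already limited matches with the comparator from the Sort. */
    static List<String> postSort(List<String> topMatches, Sort sort) throws LBParameterException {
        List<String> sorted = new ArrayList<>(topMatches);
        sorted.sort(sort.getComparatorForSearchClass(String.class));
        return sorted;
    }

    public static void main(String[] args) throws LBParameterException {
        List<String> top3 = Arrays.asList("Heart", "Heart Failure", "Heart Attack");
        System.out.println(postSort(top3, new AlphabeticalSort()));
    }
}
```

Because the comparator is requested per class, a Sort that does not apply to the class being sorted can reject the request before any sorting work is done.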

The n+1 SELECTs problem concerns how information is retrieved from the database. Ideally, as few queries as possible are used, rather than one query followed by a separate query for each of the n rows it returns. This is desirable because:

To avoid this, a JOIN query can be used.

Given two database tables, retrieve the Code, Name, and Qualifier for each Code

Table Codes

| Code | Name |
|---|---|
| C01234 | Heart |
| C98765 | Heart Attack |

Table Qualifiers

| Code | Qualifier |
|---|---|
| C01234 | isAnOrgan |
| C98765 | isADisease |

    SELECT * FROM Codes

Results in:

| Code | Name |
|---|---|
| C01234 | Heart |
| C98765 | Heart Attack |

To get the Qualifiers, separate SELECTs must be used for each.

    SELECT * FROM Qualifiers WHERE Code = 'C01234'

And

    SELECT * FROM Qualifiers WHERE Code = 'C98765'

This sequence results in 1 Query to retrieve the data from the Codes table, and then n Queries from the Qualifiers table. This results in n+1 total Queries.

Given two database tables, retrieve the Code, Name, and Qualifier for each Code

Table Codes

| Code | Name |
|---|---|
| C01234 | Heart |
| C98765 | Heart Attack |

Table Qualifiers

| Code | Qualifier |
|---|---|
| C01234 | isAnOrgan |
| C98765 | isADisease |

    SELECT Codes.Code, Codes.Name, Qualifiers.Qualifier
    FROM Codes
    JOIN Qualifiers ON Codes.Code = Qualifiers.Code

Results in:

| Code | Name | Qualifier |
|---|---|---|
| C01234 | Heart | isAnOrgan |
| C98765 | Heart Attack | isADisease |

Because of the JOIN, only one Query is needed to retrieve all of the data from the database.

Although sometimes obvious, n+1 queries can remain in a system undetected until scaling problems are noticed.
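
One way to make such hidden n+1 patterns visible is to count the queries issued per request. The sketch below does this against an in-memory stand-in for the two example tables; `FakeDatabase` and the method names are hypothetical, and no real SQL is executed.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

/** Sketch that counts queries for the n+1 approach versus a single JOIN. */
class SelectCountSketch {

    /** In-memory stand-in for a database; every method call counts as one query. */
    static class FakeDatabase {
        private final Map<String, String> names = new LinkedHashMap<>();      // Code -> Name
        private final Map<String, String> qualifiers = new LinkedHashMap<>(); // Code -> Qualifier
        int queryCount = 0;

        FakeDatabase() {
            names.put("C01234", "Heart");
            names.put("C98765", "Heart Attack");
            qualifiers.put("C01234", "isAnOrgan");
            qualifiers.put("C98765", "isADisease");
        }

        /** Stands for: SELECT * FROM Codes */
        List<String[]> selectCodes() {
            queryCount++;
            List<String[]> rows = new ArrayList<>();
            for (Map.Entry<String, String> e : names.entrySet()) {
                rows.add(new String[] {e.getKey(), e.getValue()});
            }
            return rows;
        }

        /** Stands for: SELECT * FROM Qualifiers WHERE Code = ? */
        String selectQualifier(String code) {
            queryCount++;
            return qualifiers.get(code);
        }

        /** Stands for one JOIN of Codes and Qualifiers on Code (every code has a qualifier here). */
        List<String[]> selectJoined() {
            queryCount++;
            List<String[]> rows = new ArrayList<>();
            for (Map.Entry<String, String> e : names.entrySet()) {
                rows.add(new String[] {e.getKey(), e.getValue(), qualifiers.get(e.getKey())});
            }
            return rows;
        }
    }

    /** n+1 approach: one query for the codes, then one query per code. */
    static List<String[]> loadWithSeparateSelects(FakeDatabase db) {
        List<String[]> result = new ArrayList<>();
        for (String[] row : db.selectCodes()) {
            result.add(new String[] {row[0], row[1], db.selectQualifier(row[0])});
        }
        return result;
    }

    /** JOIN approach: a single query returns all three columns. */
    static List<String[]> loadWithJoin(FakeDatabase db) {
        return db.selectJoined();
    }

    public static void main(String[] args) {
        FakeDatabase first = new FakeDatabase();
        loadWithSeparateSelects(first);
        FakeDatabase second = new FakeDatabase();
        loadWithJoin(second);
        System.out.println("Separate SELECTs: " + first.queryCount + " queries");
        System.out.println("JOIN: " + second.queryCount + " query");
    }
}
```

For the two example rows the first approach issues three queries and the second issues one; the gap grows linearly with the number of rows returned.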

In LexEVS there were 3 n+1 SELECT queries fixed:

Loads of the NCI MetaThesaurus RRF formatted data into the LexGrid model require a number of adjustments in order to accurately reflect the state of the data as it exists in the current RRF files.

Most data elements will be loaded as either properties or property qualifiers:

A few will be loaded as qualifiers to associations.

No new API retrieval methods will be implemented in the scope of LexEVS 5.1. However, some may be required in the scope of 6.0 for any mapping elements implemented as new model elements or model extensions to LexGrid. No changes to user interfaces will occur. Service methods for loading these elements will be consistent with the new Spring Batch loader framework.

Problem:

REL and RELA column elements from the RRF source need to be connected.

Currently these are loaded as separate relationships preventing the user from connecting to the REL/RELA combinations that actually occur in the NCI-META (e.g. RELA may be different for same REL value in different sources).

Requirement:

A single relationship should be loaded for a REL/RELA combination for a particular SAB between two CUIs.

Solution:

Since RELA type RRF elements have been defined as relationship names specific to sources and not independent relationships themselves, these elements will be loaded as association qualifiers in the LexGrid model.

Problem and Requirement:

User is unable to distinguish individual relationships from one source or another. The same association "entity" exists only once but has two "source" qualifiers.

User is unable to distinguish the AUI1/STYPE1 and AUI2/STYPE2 which gives us the information about what source data structures are actually being connected by MRREL entries. Users also need the ability to associate AUI/STYPE fields with SAB.

The user's sole choice for rendering a relationship in terms of the strings on either side is to use preferred concept names.

Proposed Solution:

Propose AUI to AUI - the way CUI to CUI are currently handled in the implementation.

Propose entity to entity relationship - will still have to account for CUI to CUI relationships.

Load each unique RUI (the result would be quite large). They would need to be listed as supported associations (this is not how supported associations are traditionally used).

Load supporting column elements from MRREL.RRF including contents of:

AUI1, STYPE1, AUI2, STYPE2, SRUI, SAB, RG, SUPPRESS, CVF, RUI

These will be available as elements of the overriding Metathesaurus Association and loaded as association qualifiers.

Problem:

Self-referencing relationships (CUI1 = CUI2) cannot be fully represented in our model. Previously, these were loaded as PropertyLinks. This fit into the LexEVS model well, but left out important RRF information. Most notably, PropertyLinks cannot contain Qualifiers like normal relations can. Because of the increased number of Qualifiers that are required to be placed on relations, much information would be lost by representing these relations as PropertyLinks.

Solution:

Do not treat CUI1 = CUI2 relationships differently from CUI1 != CUI2 relationships. For API and query purposes, qualify these relationships with a 'selfReferencing=true' Qualifier. In this way, we can still avoid cycles in the API, but maintain all relevant Qualifier information in the relation.

Problem:

MRSAT.RRF is not loaded; it is only accessed by certain preferred term algorithms. This data should be loaded as concept properties (STYPE=CUI), properties on properties (STYPE=AUI, SAUI, CODE, SCUI, SDUI), and qualifiers on associations (STYPE=RUI, SRUI). Some complexity may arise because concept properties can have additional qualifiers, but properties on properties and association qualifiers cannot.

Requirement:

If the STYPE is something other than RUI or SRUI, you can load that row as an entity property. The fields you'd want to capture are:

CUI - used as the entityCode and loaded as such in the table.

METAUI - load as a propertyQualifier (name=METAUI, value)

STYPE - load as a propertyQualifier (name=STYPE, value)

ATUI - load as propertyId

ATN - load as property name

SAB - load as a propertyQualifier (typeName=source)

ATV - load as a propertyValue

SUPPRESS - load as propertyQualifier if value != N

Problem:

The SAB-specific ranking of representational form in MRRANK is not exposed to the user (it is used only in the underlying ranking and selection of preferred presentations for a given concept).

Requirement:

Load elements of MRRANK so that they are available to the user.

Proposed Solution:

Load MRRANK as a property qualifier on the Presentation type property, with the property name "mrrank".

Retrieval:

Available in the current LexEVS API.

Problem:

MRSAB.RRF file data is not loaded or is otherwise unavailable to the user.

Requirement:

Load MRSAB.RRF file data as metadata

Implemented Solution:

The entire content of each row of the MRSAB file is loaded as metadata to an external XML file, with tags created from the column names and the values inserted between the tags as appropriate.

Problem:

MRMAP.RRF source load is not supported in current load. Currently this RRF file is not populated in NCI Metathesaurus distributions. Mapping is not explicitly supported in the LexGrid Model.

Requirement:

Load MRMAP data.

Solution:

To be evaluated for a load to current model elements or possible new model mapping elements. The general agreement is that this is more appropriately implemented in 6.0.

Problem:

HCD is loaded as a property on the presentation, but the SAB isn't associated with it, so we do not know the source of the HCD. (Only rows that have the HCD field populated are relevant.)

Path to Root (PTR) is also not loaded, but is instead used to determine path to root operations in LexEVS.

Requirement:

These elements need to be loaded and available from the LexEVS API.

Solution:

Load HCD associated field SAB as property qualifier when HCD is present. Load PTR as property.

Problem:

MRDOC contains metadata unavailable to the user. It is not loaded by LexEVS.

Requirement:

This metadata will be made available to the user.

Solution:

MRDOC's column names and content will be processed as tag/value mappings to a metadata file.

Problem:

Some values from each row are not loaded by LexEVS.

Requirement:

AUI should be loaded to connect it with the presentation

ATUI, SUPPRESS, CVF, and SATUI should be loaded and exposed to the user.

Solution:

ATUI, SUPPRESS, CVF, and SATUI column values will be loaded as property qualifiers on the Definition type property derived from MRDEF.

Problem:

Some elements from the columns of MRCONSO.RRF are not loaded by LexEVS.

Requirement:

Load LUI, SUI, SAUI, SDUI, SUPPRESS, and CVF fields and expose them to the user.

Solution:

All noted values will be loaded as property qualifiers.

The LexEVS Value Domain and Pick List service will provide the ability to load Value Domain and Pick List Definitions into the LexGrid repository, to apply user restrictions, and to dynamically resolve the definitions at run time. Both the Value Domain and Pick List services are an integrated part of the LexEVS core API.

The LexEVS Value Domain and Pick List service will provide programmatic access to load Value Domain and Pick List Definitions using the domain objects that are available via the LexGrid logical model.

The LexEVS Value Domain and Pick List service will provide the ability to apply certain user restrictions (for example, pickListId or valueDomain URI) and dynamically resolve the Value Domain and Pick List definitions at run time.

The LexEVS Value Domain and Pick List Service is meant to expose the API specifically for the Value Domain and Pick List elements of the LexGrid Logical Model. For more information on the LexGrid model see http://informatics.mayo.edu/

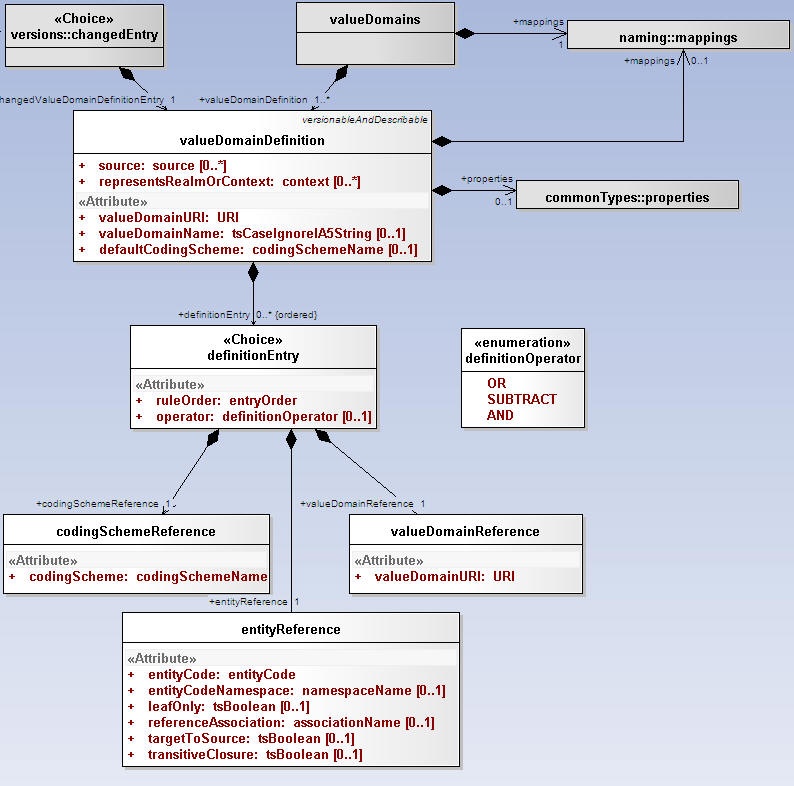

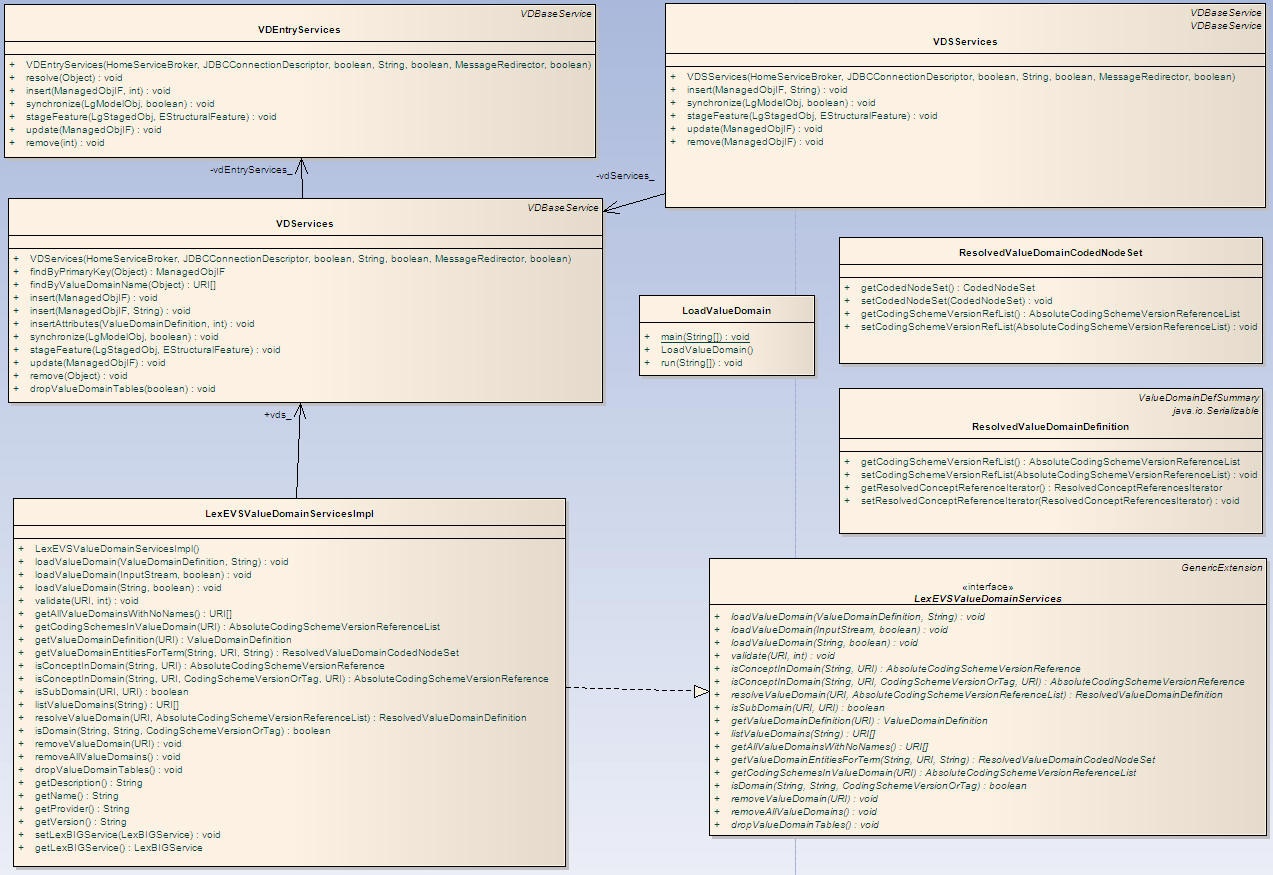

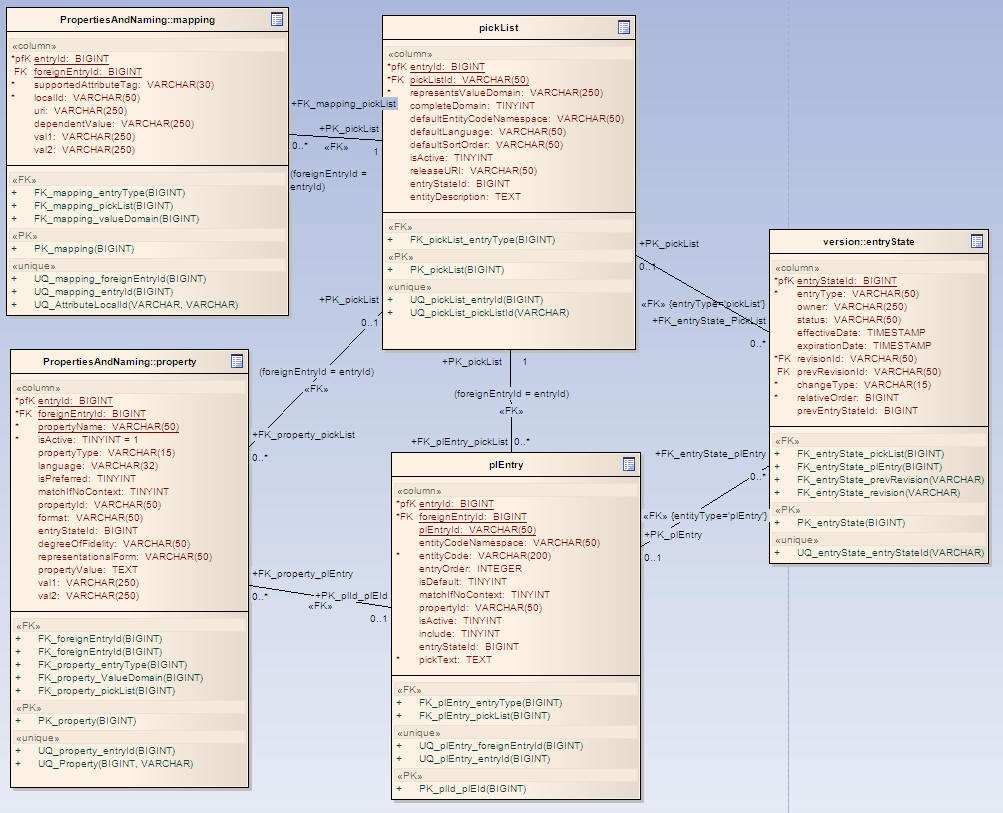

Here is a UML representation of Value Domain within LexGrid 200901 model.

A definition of a given value domain. A value domain can be a simple description with no associated value domain entries, or it can consist of one or more definitionEntries that resolve to an enumerated list of entityCodes when applied to one or more codingScheme versions.

Attributes of Value Domain Definition :

Source: The local identifiers of the source(s) of this property. Must match a local id of a supportedSource in the corresponding mappings section.

representsRealmOrContext: The local identifiers of the context(s) in which this value domain applies. Must match a local id of a supportedContext in the corresponding mappings section.

valueDomainURI: The URI of this value domain.

valueDomainName: The name of this domain, if any.

defaultCodingScheme: Local name of the primary coding scheme from which the domain is drawn. defaultCodingScheme must match a local id of a supportedCodingScheme in the mappings section.

A reference to an entry code, a coding scheme or another value domain along with the instructions about how the reference is applied. Definition entries are applied in entryOrder, with each successive entry either adding to or subtracting from the final set of entity codes.

Attributes of Value Domain Definition Entry:

ruleOrder: The unique identifier of the definition entry within the definition as well as the relative order in which this entry should be applied.

operator: How this entry is to be applied to the value domain.

A reference to all of the entity codes in a given coding scheme.

Attributes of Coding Scheme Reference :

codingScheme: The local identifier of the coding scheme that the entity codes are drawn from. codingSchemeName must match a local id of a supportedCodingScheme in the mappings section.

A reference to the set of codes defined in another value domain.

Attributes of Value Domain Reference :

valueDomainURI: The URI of the value domain to apply the operator to. This value domain may be contained within the local service or may need to be resolved externally.

A reference to an entityCode and/or one or more entityCodes that have a relationship to the specified entity code.

Attributes of Entity Reference :

entityCode: The entity code being referenced.

entityCodeNamespace: Local identifier of the namespace of the entityCode. entityCodeNamespace must match a local id of a supportedNamespace in the corresponding mappings section. If omitted, the URI of the defaultCodingScheme will be used as the URI of the entity code.

leafOnly: If true and referenceAssociation is supplied and referenceAssociation is defined as transitive, include all entity codes that are "leaves" in transitive closure of referenceAssociation as applied to entity code. Default: false

referenceAssociation: The local identifier of an association that appears in the native relations collection in the default coding scheme. This association is used to describe a set of entity codes. If absent, only the entityCode itself is included in this definition.

targetToSource: If true and referenceAssociation is supplied, navigate from entityCode as the association target to the corresponding sources. If transitiveClosure is true and the referenceAssociation is transitive, include all the ancestors in the list rather than just the direct "parents" (sources).

transitiveClosure: If true and referenceAssociation is supplied and referenceAssociation is defined as transitive, include all entity codes that belong to transitive closure of referenceAssociation as applied to entity code. Default: false

The description of how a given definition entry is applied.

Attributes of Definition Operator :

OR: Add the set of entityCodes described by the currentEntity to the value domain. (logical OR)

SUBTRACT: Subtract (remove) the set of entityCodes described by the currentEntity from the value domain. (logical AND NOT)

AND: Only include the entity codes that are both in the value domain and the definition entry. (logical AND)
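
The three operators correspond to set union, set difference, and set intersection applied in order. The sketch below shows one way the resolution could proceed, assuming entries are applied in ascending ruleOrder starting from an empty set; the `Entry` class, the `resolve` method, and the entity codes are hypothetical and are not part of the LexGrid model or the LexEVS API.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Comparator;
import java.util.LinkedHashSet;
import java.util.List;
import java.util.Set;

/** Sketch of applying definition entries with OR, SUBTRACT and AND operators. */
class DefinitionOperatorSketch {

    enum Operator { OR, SUBTRACT, AND }

    /** Hypothetical, already resolved definition entry: an operator plus a set of entity codes. */
    static class Entry {
        final int ruleOrder;
        final Operator operator;
        final Set<String> entityCodes;

        Entry(int ruleOrder, Operator operator, String... entityCodes) {
            this.ruleOrder = ruleOrder;
            this.operator = operator;
            this.entityCodes = new LinkedHashSet<>(Arrays.asList(entityCodes));
        }
    }

    /** Applies the entries in ascending ruleOrder, starting from an empty value domain. */
    static Set<String> resolve(List<Entry> entries) {
        List<Entry> ordered = new ArrayList<>(entries);
        ordered.sort(Comparator.comparingInt(e -> e.ruleOrder));

        Set<String> result = new LinkedHashSet<>();
        for (Entry entry : ordered) {
            switch (entry.operator) {
                case OR:
                    result.addAll(entry.entityCodes);    // union
                    break;
                case SUBTRACT:
                    result.removeAll(entry.entityCodes); // difference
                    break;
                case AND:
                    result.retainAll(entry.entityCodes); // intersection
                    break;
            }
        }
        return result;
    }

    public static void main(String[] args) {
        List<Entry> entries = Arrays.asList(
                new Entry(3, Operator.AND, "C1", "C3", "C4"),
                new Entry(1, Operator.OR, "C1", "C2", "C3"),
                new Entry(2, Operator.SUBTRACT, "C2"));
        System.out.println(resolve(entries));
    }
}
```

Order matters in this scheme: an AND entry applied first would intersect with an empty set and leave nothing for later entries to refine.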

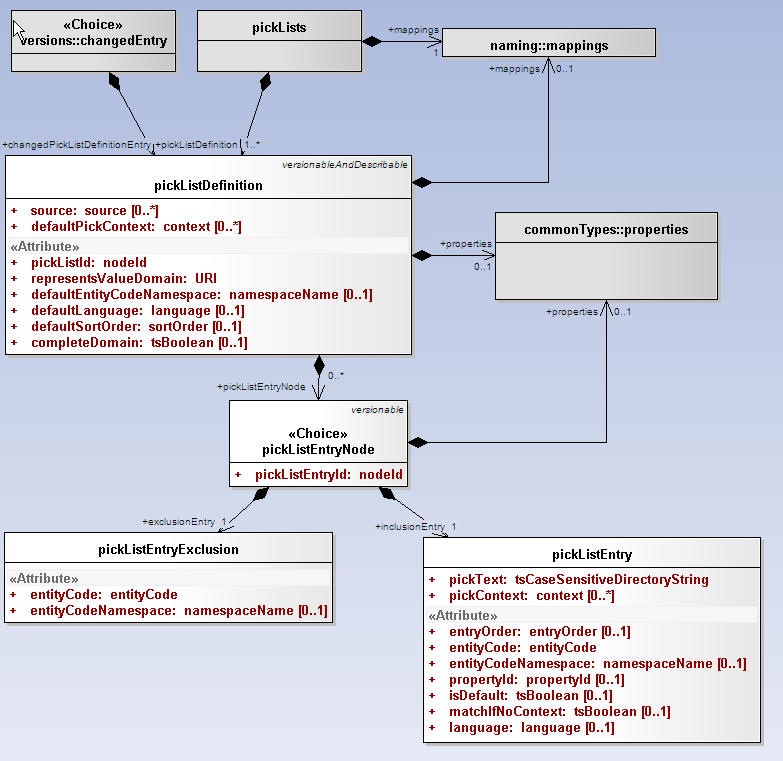

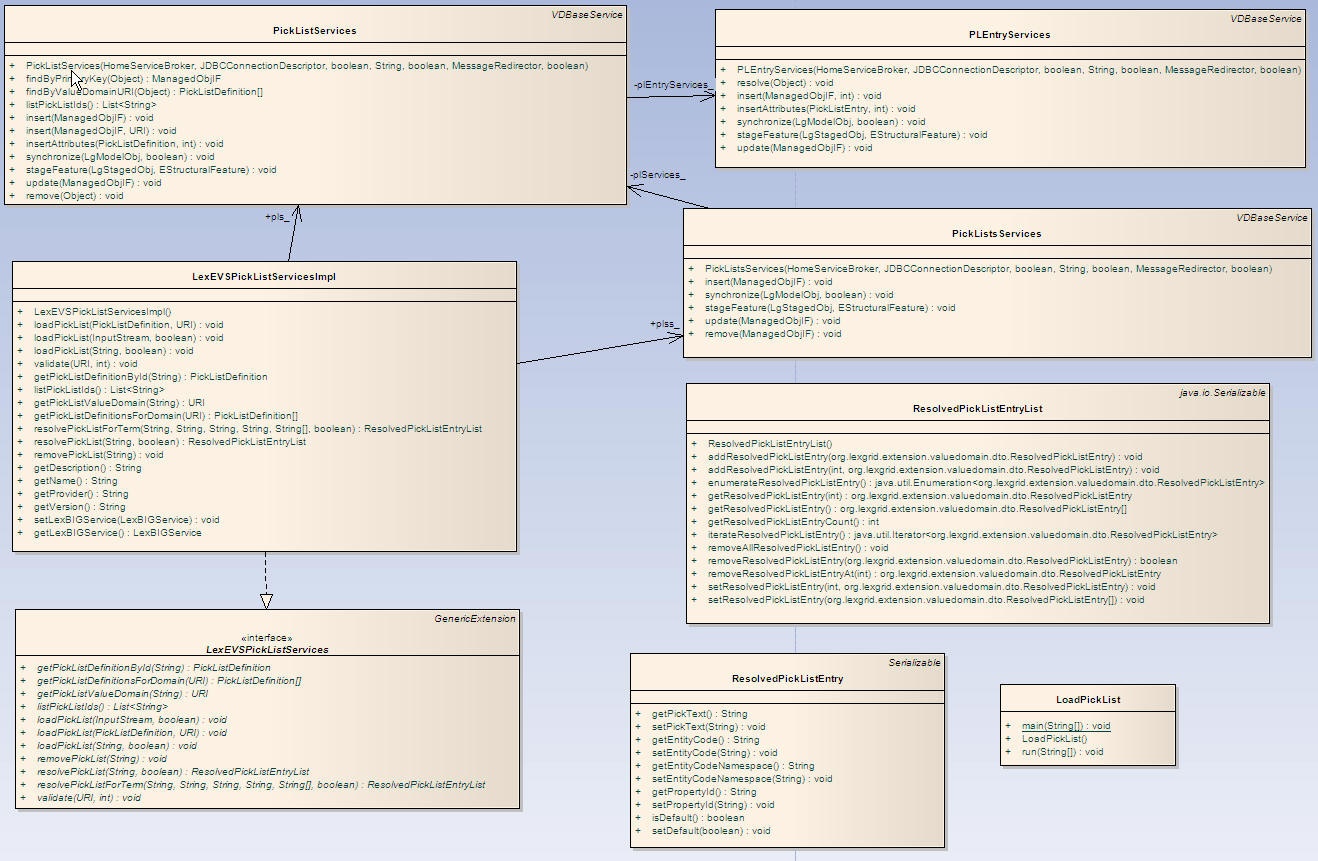

Here is a UML representation of Pick List within LexGrid 200901 model:

An ordered list of entity codes and corresponding presentations drawn from a value domain.

Attributes of Pick List Definition :

Source: The local identifiers of the source(s) of this pick list definition. Must match a local id of a supportedSource in the corresponding mappings section.

pickListId: An identifier that uniquely names this list within the context of the collection.

representsValueDomain: The URI of the value domain definition that is represented by this pick list

defaultEntityCodeNamespace: Local name of the namespace to which the entry codes in this list belong. defaultEntityCodeNamespace must match a local id of a supportedNamespace in the mappings section.

defaultLanguage: The local identifier of the language that is used to generate the text of this pick list if not otherwise specified. Note that this language does NOT necessarily have any correlation with the language of a pickListEntry itself or the language of the target user. defaultLanguage must match a local id of a supportedLanguage in the mappings section.

defaultSortOrder: The local identifier of a sort order that is used as the default in the definition of the pick list

defaultPickContext: The local identifiers of the context used in the definition of the pick list.

completeDomain: True means that this pick list should represent all of the entries in the domain. Any active entity codes that aren't in the specific pick list entries are added to the end, using the designations identified by the defaultLanguage, defaultSortOrder and defaultPickContext. Default: false

An inclusion (pickListEntry) or exclusion (pickListEntryExclusion) in a pick list definition

Attributes of Pick List Entry Node :

pickListEntryId: Unique identifier of this node within the list.

An entity code and corresponding textual representation.

Attributes of Pick List Entry:

pickText: The text that represents this node in the pick list. Some business rules may require that this string match a presentation associated with the entityCode

pickContext: The local identifiers of the context(s) in which this entry applies. pickContext must match a local id of a supportedContext in the mappings section

entryOrder: Relative order of this entry in the list. pickListEntries without a supplied order follow all entries with an order, and their order relative to each other is not defined.

entityCode: Entity code associated with this entry.

entityCodeNamespace: Local identifier of the namespace of the entity code if different than the pickListDefinition defaultEntityCodeNamespace. entityCodeNamespace must match a local id of a supportedNamespace in the mappings section.

propertyId: The property identifier associated with the entityCode and entityCodeNamespace that the pickText was derived from. If absent, the pick text can be anything. Some terminologies may have business rules requiring this attribute to be present.

isDefault: True means that this is the default entry for the supplied language and context.

matchIfNoContext: True means that this entry can be used if no contexts are supplied, even though pickContext is present.

language: The local name of the language to be used when the application/user supplies a selection language. If absent, this entry matches all languages. language must match a local id of a supportedLanguage in the mappings section.
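The entryOrder rule described above (ordered entries first, unordered entries after them) can be sketched as follows. `PickEntry` and `EntryOrderSketch` are hypothetical names used only for this illustration; they are not LexEVS classes, and a null entryOrder stands for "no order supplied".

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.List;

// Hypothetical, simplified stand-in for a pick list entry.
final class PickEntry {
    final String pickText;
    final Integer entryOrder; // null when no order was supplied

    PickEntry(String pickText, Integer entryOrder) {
        this.pickText = pickText;
        this.entryOrder = entryOrder;
    }
}

final class EntryOrderSketch {
    /** Entries with an order come first (ascending); the rest follow. */
    static List<PickEntry> sort(List<PickEntry> entries) {
        List<PickEntry> sorted = new ArrayList<>(entries);
        // nullsLast places entries without an entryOrder at the end.
        sorted.sort(Comparator.comparing(
                (PickEntry e) -> e.entryOrder,
                Comparator.nullsLast(Comparator.naturalOrder())));
        return sorted;
    }
}
```

Because the order among entries without an entryOrder is not defined, callers should not rely on where those entries land relative to each other.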

An entity code that is explicitly excluded from a pick list.

Attributes of Pick List Entry Exclusion:

entityCode: Entity code associated with this entry.

entityCodeNamespace: Local identifier of the namespace of the entity code if different than the pickListDefinition defaultEntityCodeNamespace. entityCodeNamespace must match a local id of a supportedNamespace in the mappings section.

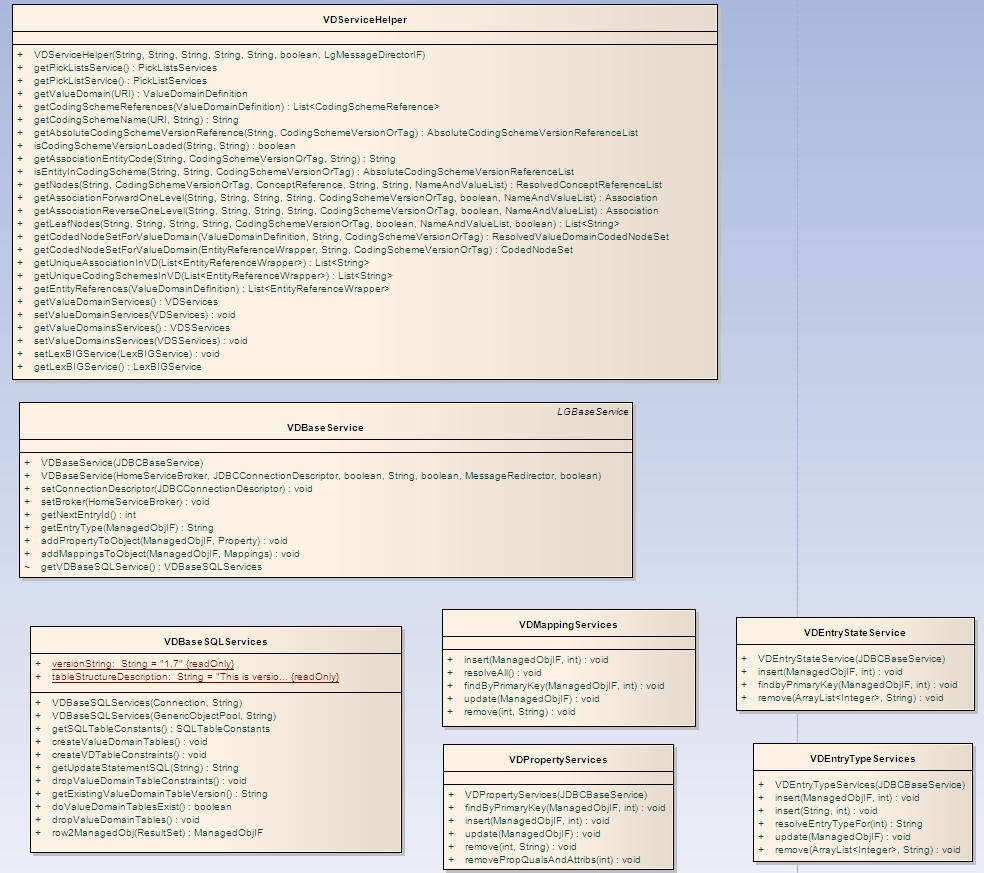

These classes are used in common across the Value Domain and Pick List implementations.

| Class Name | Description |
|---|---|
| VDEntryTypeServices | Class to handle Entry Type objects to and from the database. |
| VDEntryStateServices | Class to handle Entry State objects to and from the database. |
| VDPropertyServices | Class to handle Property objects to and from the database. |
| VDMappingServices | Class to handle supported Mappings objects to and from the database. |
| VDServiceHelper | Helper class containing methods that are commonly used. |
| VDBaseSQLServices | Class to handle SQL Services. |
| VDBaseService | Base service class to handle all Value Domain and Pick List related objects to and from the database. |

Classes that implement LexEVS Value Domain API

| Class Name | Description |
|---|---|
| VDSServices | Class to handle a list of Value Domain Definition objects to and from the database. |
| VDServices | Class to handle individual Value Domain Definition objects to and from the database. |
| VDEntryServices | Class to handle Value Domain Entry objects to and from the database. |
| LexEVSValueDomainServices | Primary interface for the LexEVS Value Domain API. |
| LexEVSValueDomainServicesImpl | Implementation of LexEVSValueDomainServices, which is the primary interface for the LexEVS Value Domain API. |
| LoadValueDomain | Imports the Value Domain Definitions in the source file, provided in LexGrid canonical format, to the LexBIG repository. |
| ResolvedValueDomainCodedNodeSet | Contains the coding scheme version reference list that was used to resolve the value domain, and the coded node set. |
| ResolvedValueDomainDefinition | A resolved Value Domain definition containing the coding scheme version reference list that was used to resolve the value domain and an iterator for resolved concepts. |

Classes that implement LexEVS Pick List API

| Class Name | Description |
|---|---|
| PickListsServices | Class to handle a list of Pick List Definitions. |
| PickListServices | Class to handle individual Pick List Definition objects to and from the database. |
| PLEntryServices | Class to handle Pick List Entry objects to and from the database. |
| LexEVSPickListServices | Primary interface for the LexEVS Pick List API. |
| LexEVSPickListServicesImpl | Implementation of LexEVSPickListServices, which is the primary interface for the LexEVS Pick List API. |
| LoadPickList | Imports the Pick List Definitions in the source file, provided in LexGrid canonical format, to the LexBIG repository. |
| ResolvedPickListEntryList | Class to hold a list of resolved pick list entries. |
| ResolvedPickListEntry | Bean for resolved pick list entries. |

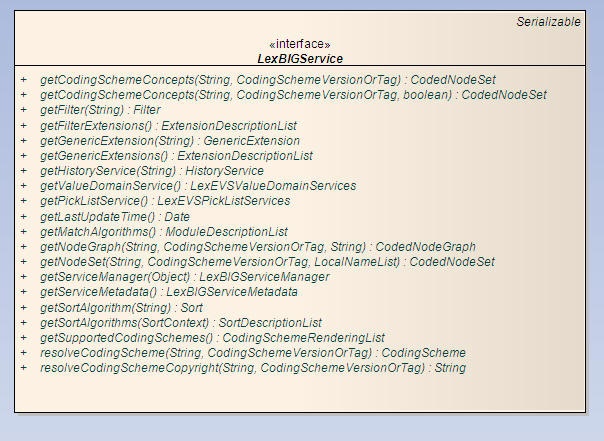

An interface to the LexEVS Value Domain and Pick List Services can be obtained using an instance of LexBigService.

| Method Name | Description |
|---|---|
| getValueDomainService() | Returns an interface to the LexEVS Value Domain API. |
| getPickListService() | Returns an interface to the LexEVS Pick List API. |


| Information | getValueDomainService() |
|---|---|
| Description: | Returns an interface to LexEVS Value Domain API. |
| Input: | none |
| Output: | org.lexgrid.valuedomain.LexEVSValueDomainServices |
| Exception: | LBException |
| Implementation Details: | Implementation: |

| Information | getPickListService() |
|---|---|
| Description: | Returns an interface to LexEVS Pick List API. |
| Input: | none |
| Output: | org.lexgrid.valuedomain.LexEVSPickListServices |
| Exception: | LBException |
| Implementation Details: | Implementation: |

There are three methods that can be used to load Value Domain Definitions:

| Information | loadValueDomain(ValueDomainDefinition vddef, String systemReleaseURI) |
|---|---|
| Description: | Loads the supplied valueDomainDefinition object. |
| Input: | org.LexGrid.emf.valueDomains.ValueDomainDefinition, |
| Output: | none |
| Exception: | LBException |
| Implementation Details: | Implementation: |

| Information | loadValueDomain(InputStream inputStream, boolean failOnAllErrors) |
|---|---|
| Description: | Loads the valueDomainDefinitions found in inputStream. |
| Input: | java.io.InputStream |
| Output: | none |
| Exception: | Exception |
| Implementation Details: | Implementation: |

| Information | loadValueDomain(String xmlFileLocation, boolean failOnAllErrors) |
|---|---|
| Description: | Loads the valueDomainDefinitions found in the input XML file. |
| Input: | java.lang.String |
| Output: | none |
| Exception: | Exception |
| Implementation Details: | Implementation: |

| Information | validate(URI uri, int validationLevel) throws LBParameterException |
|---|---|
| Description: | Performs validation of the candidate resource without loading data. |
| Input: | java.net.URI |
| Output: | none |
| Exception: | org.LexGrid.LexBIG.Exceptions.LBParameterException |
| Implementation Details: | Implementation: |

| Information | isConceptInDomain(String entityCode, URI valueDomainURI) |
|---|---|
| Description: | Determines whether the supplied entity code is a valid result for the supplied value domain and, if it is, returns the particular codingSchemeVersion that was used. |
| Input: | java.lang.String, |
| Output: | org.LexGrid.LexBIG.DataModel.Core.AbsoluteCodingSchemeVersionReference |
| Exception: | org.LexGrid.LexBIG.Exceptions.LBException |
| Implementation Details: | Implementation: |

| Information | isConceptInDomain(String entityCode, URI entityCodeNamespace, CodingSchemeVersionOrTag csvt, URI valueDomainURI) |
|---|---|
| Description: | Similar to the previous method, this method determines whether the supplied entity code and entity namespace are a valid result for the supplied value domain when resolved against the supplied Coding Scheme Version or Tag. |
| Input: | java.lang.String, |
| Output: | org.LexGrid.LexBIG.DataModel.Core.AbsoluteCodingSchemeVersionReference |
| Exception: | org.LexGrid.LexBIG.Exceptions.LBException |
| Implementation Details: | Implementation: |

| Information | resolveValueDomain(URI valueDomainURI, AbsoluteCodingSchemeVersionReferenceList csVersionList) |
|---|---|
| Description: | Resolves a value domain using the supplied set of coding scheme versions. |
| Input: | java.net.URI, |
| Output: | org.lexgrid.valuedomain.dto.ResolvedValueDomainDefinition |
| Exception: | org.LexGrid.LexBIG.Exceptions.LBException |
| Implementation Details: | Implementation: |

| Information | isSubDomain(URI childValueDomainURI, URI parentValueDomainURI) |
|---|---|
| Description: | Checks whether childValueDomainURI is a child of parentValueDomainURI. |
| Input: | java.net.URI, |
| Output: | boolean |
| Exception: | org.LexGrid.LexBIG.Exceptions.LBException |
| Implementation Details: | Implementation: |

| Information | getValueDomainDefinition(URI valueDomainURI) |
|---|---|
| Description: | Returns the value domain definition for the supplied value domain URI. |
| Input: | java.net.URI |
| Output: | org.LexGrid.emf.valueDomains.ValueDomainDefinition |
| Exception: | org.LexGrid.LexBIG.Exceptions.LBException |
| Implementation Details: | Implementation: |

| Information | listValueDomains(String valueDomainName) |
|---|---|
| Description: | Returns the URIs of the value domain definition(s) for the supplied domain name. If the name is null, returns everything. If the name is not null, returns the value domain(s) that have the assigned name. |
| Input: | java.lang.String |
| Output: | java.net.URI[] |
| Exception: | org.LexGrid.LexBIG.Exceptions.LBException |
| Implementation Details: | Implementation: |
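The null-versus-name behavior of listValueDomains can be illustrated with an in-memory sketch. This is not the LexEVS implementation, which works against the database; `ValueDomainRegistrySketch` and its `register` method are hypothetical names introduced only to show the lookup rule.

```java
import java.net.URI;
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Hypothetical in-memory stand-in for the value domain store.
final class ValueDomainRegistrySketch {
    // valueDomainURI -> valueDomainName (null when the domain is unnamed)
    private final Map<URI, String> namesByUri = new LinkedHashMap<>();

    void register(URI valueDomainURI, String valueDomainName) {
        namesByUri.put(valueDomainURI, valueDomainName);
    }

    /** A null name returns every URI; otherwise only domains with that name. */
    URI[] listValueDomains(String valueDomainName) {
        List<URI> hits = new ArrayList<>();
        for (Map.Entry<URI, String> entry : namesByUri.entrySet()) {
            if (valueDomainName == null || valueDomainName.equals(entry.getValue())) {
                hits.add(entry.getKey());
            }
        }
        return hits.toArray(new URI[0]);
    }
}
```

A name that matches nothing yields an empty array in this sketch; how the real service reports that case is not stated in this document.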

| Information | getAllValueDomainsWithNoNames() |
|---|---|
| Description: | Returns the URIs of all unnamed value domain definition(s). |
| Input: | none |
| Output: | java.net.URI[] |
| Exception: | org.LexGrid.LexBIG.Exceptions.LBException |
| Implementation Details: | Implementation: |

| Information | getValueDomainEntitiesForTerm(String term, URI valueDomainURI, String matchAlgorithm) |
|---|---|
| Description: | Resolves the supplied value domain and restricts the result to the supplied term and matchAlgorithm. The returned ResolvedValueDomainCodedNodeSet contains the codingScheme URI and version that were used to resolve the value domain, and the CodedNodeSet. |
| Input: | java.lang.String, |
| Output: | org.lexgrid.valuedomain.dto.ResolvedValueDomainCodedNodeSet |
| Exception: | org.LexGrid.LexBIG.Exceptions.LBException |
| Implementation Details: | Implementation: |

| Information | getCodingSchemesInValueDomain(URI valueDomainURI) |
|---|---|
| Description: | Returns the list of coding scheme summaries referenced by the supplied value domain. |
| Input: | java.net.URI |
| Output: | org.LexGrid.LexBIG.DataModel.Collections.AbsoluteCodingSchemeVersionReferenceList |
| Exception: | org.LexGrid.LexBIG.Exceptions.LBException |
| Implementation Details: | Implementation: |

| Information | isDomain(String entityCode, String codingSchemeName, CodingSchemeVersionOrTag csvt) |
|---|---|
| Description: | Determines whether the supplied entity code is of type valueDomain in the supplied coding scheme. Returns true if it is, otherwise false. |
| Input: | java.lang.String, |
| Output: | boolean |
| Exception: | org.LexGrid.LexBIG.Exceptions.LBException |
| Implementation Details: | Implementation: |

| Information | removeValueDomain(URI valueDomainURI) |
|---|---|
| Description: | Removes the supplied value domain definition from the system. |
| Input: | java.net.URI |
| Output: | none |
| Exception: | org.LexGrid.LexBIG.Exceptions.LBException, |
| Implementation Details: | Implementation: |

| Information | removeAllValueDomains() |
|---|---|
| Description: | Removes all value domain definitions from the system. |
| Input: | none |
| Output: | none |
| Exception: | org.LexGrid.LexBIG.Exceptions.LBException, |
| Implementation Details: | Implementation: |

| Information | dropValueDomainTables() |
|---|---|
| Description: | Drops the value domain tables only if there are no value domain and pick list entries. |
| Input: | none |
| Output: | none |
| Exception: | org.LexGrid.LexBIG.Exceptions.LBException, |
| Implementation Details: | Implementation: |

There are three methods that can be used to load Pick List Definitions:

| Information | loadPickList(PickListDefinition pldef, String systemReleaseURI) |
|---|---|
| Description: | Loads the supplied Pick List Definition object. |
| Input: | org.LexGrid.emf.valueDomains.PickListDefinition, |
| Output: | none |
| Exception: | LBException |
| Implementation Details: | Implementation: |

| Information | loadPickList(InputStream inputStream, boolean failOnAllErrors) |
|---|---|
| Description: | Loads the Pick List Definitions found in inputStream. |
| Input: | java.io.InputStream |
| Output: | none |
| Exception: | Exception |
| Implementation Details: | Implementation: |

| Information | loadPickList(String xmlFileLocation, boolean failOnAllErrors) |
|---|---|
| Description: | Loads the Pick List Definitions found in the input XML file. |
| Input: | java.lang.String |
| Output: | none |
| Exception: | Exception |
| Implementation Details: | Implementation: |

| Information | validate(URI uri, int validationLevel) throws LBParameterException |
|---|---|
| Description: | Performs validation of the candidate resource without loading data. |
| Input: | java.net.URI |
| Output: | none |
| Exception: | org.LexGrid.LexBIG.Exceptions.LBParameterException |
| Implementation Details: | Implementation: |

| Information | getPickListDefinitionById(String pickListId) |
|---|---|
| Description: | Returns the pickList definition for the supplied pickListId. |
| Input: | java.lang.String |
| Output: | org.LexGrid.emf.valueDomains.PickListDefinition |
| Exception: | org.LexGrid.LexBIG.Exceptions.LBException |
| Implementation Details: | Implementation: |

| Information | getPickListDefinitionsForDomain(URI valueDomainURI) |
|---|---|
| Description: | Returns all the pickList definitions that represent the supplied valueDomain URI. |
| Input: | java.net.URI |
| Output: | org.LexGrid.emf.valueDomains.PickListDefinition[] |
| Exception: | org.LexGrid.LexBIG.Exceptions.LBException |
| Implementation Details: | Implementation: |

| Information | getPickListValueDomain(String pickListId) |
|---|---|
| Description: | Returns the URI of the valueDomain that the pickList represents. |
| Input: | java.lang.String |
| Output: | java.net.URI |
| Exception: | org.LexGrid.LexBIG.Exceptions.LBException |
| Implementation Details: | Implementation: |

| Information | listPickListIds() |
|---|---|
| Description: | Returns a list of pickListIds that are available in the system. |
| Input: | none |
| Output: | java.util.List<java.lang.String> |
| Exception: | org.LexGrid.LexBIG.Exceptions.LBException |
| Implementation Details: | Implementation: |

| Information | resolvePickList(String pickListId, boolean sortByText) |
|---|---|
| Description: | Resolves the pickList definition for the supplied pickListId. |
| Input: | java.lang.String, |
| Output: | org.lexgrid.valuedomain.dto.ResolvedPickListEntryList |
| Exception: | org.LexGrid.LexBIG.Exceptions.LBException |
| Implementation Details: | Implementation: |

| Information | resolvePickListForTerm(String pickListId, String term, String matchAlgorithm, String language, String[] context, boolean sortByText) |
|---|---|
| Description: | Resolves the pickList definition by applying the supplied arguments. |
| Input: | java.lang.String, |
| Output: | org.lexgrid.valuedomain.dto.ResolvedPickListEntryList |
| Exception: | org.LexGrid.LexBIG.Exceptions.LBException |
| Implementation Details: | Implementation: |

| Information | removePickList(String pickListId) |
|---|---|
| Description: | Removes the supplied Pick List Definition from the system. |
| Input: | java.lang.String |
| Output: | none |
| Exception: | org.LexGrid.LexBIG.Exceptions.LBException, |
| Implementation Details: | Implementation: |

Contains Coding Scheme Version reference list that was used to resolve the Value Domain and the CodedNodeSet.

The CodedNodeSet is not resolved.

A resolved Value Domain Definition containing the Coding Scheme Version reference list that was used to resolve the Value Domain and an iterator for resolved concepts.

Contains resolved Pick List Entry Nodes

Contains the list of resolved Pick List Entries. Also provides helpful features to add, remove, enumerate Pick List Entries.

Both the LexEVS Value Domain and Pick List services use org.LexGrid.LexBIG.Impl.loaders.MessageDirector to direct all fatal, error, warning, and info messages to the LexBIG log files in the 'log' folder of the LexEVS install directory.

Along with MessageDirector, the services will also use org.LexGrid.LexBIG.Exceptions.LBException to report fatal and error messages to the log file as well as to the console.

Scripts to load Value Domain and Pick List Definitions into the LexEVS system will be located under the 'Admin' folder of the LexEVS install directory. These loader scripts will only load XML files that are in LexGrid format.

LoadValueDomain.bat for Windows environment and LoadValueDomain.sh for Unix environment.

Both scripts take the following parameters:

| Parameter | Function |
|---|---|
| -in | Input <uri> URI or path specifying location of the source file. |
| -v | Validate <int> Perform validation of the candidate resource without loading data. |

Example:

sh LoadValueDomain.sh -in "file:///path/to/file.xml"

LoadPickList.bat for Windows environment and LoadPickList.sh for Unix environment.

Both scripts take the following parameters:

| Parameter | Function |
|---|---|
| -in | Input <uri> URI or path specifying location of the source file. |
| -v | Validate <int> Perform validation of the candidate resource without loading data. |

Example:

sh LoadPickList.sh -in "file:///path/to/file.xml"

Below is a sample XML file containing Value Domain Definitions in LexGrid format that can be loaded using LexEVS Value Domain Service.

<?xml version="1.0" encoding="UTF-8"?>

<systemRelease xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xsi:schemaLocation="http://LexGrid.org/schema/2009/01/LexGrid/versions http://LexGrid.org/schema/2009/01/LexGrid/versions.xsd"

xmlns="http://LexGrid.org/schema/2009/01/LexGrid/versions" xmlns:lgVer="http://LexGrid.org/schema/2009/01/LexGrid/versions"

xmlns:lgCommon="http://LexGrid.org/schema/2009/01/LexGrid/commonTypes" xmlns:data="data"

xmlns:lgVD="http://LexGrid.org/schema/2009/01/LexGrid/valueDomains" xmlns:lgNaming="http://LexGrid.org/schema/2009/01/LexGrid/naming"

releaseURI="http://testRelease/04" releaseDate="2008-11-07T14:55:51.615-06:00">

<lgCommon:entityDescription>Sample value domains</lgCommon:entityDescription>

<lgVer:valueDomains>

<lgVD:mappings>

<lgNaming:supportedAssociation localId="hasSubtype" uri="urn:oid:1.3.6.1.4.1.2114.108.1.8.1">hasSubtype</lgNaming:supportedAssociation>

<lgNaming:supportedCodingScheme localId="Automobiles" uri="urn:oid:11.11.0.1">Automobiles</lgNaming:supportedCodingScheme>

<lgNaming:supportedDataType localId="texthtml">text/html</lgNaming:supportedDataType>

<lgNaming:supportedDataType localId="textplain">text/plain</lgNaming:supportedDataType>

<lgNaming:supportedHierarchy localId="is_a" associationNames="hasSubtype" isForwardNavigable="true" rootCode="@">hasSubtype</lgNaming:supportedHierarchy>

<lgNaming:supportedLanguage localId="en" uri="www.en.org/orsomething">en</lgNaming:supportedLanguage>

<lgNaming:supportedNamespace localId="Automobiles" uri="urn:oid:11.11.0.1" equivalentCodingScheme="Automobiles">Automobiles</lgNaming:supportedNamespace>

<lgNaming:supportedProperty localId="textualPresentation">textualPresentation</lgNaming:supportedProperty>

<lgNaming:supportedSource localId="lexgrid.org">lexgrid.org</lgNaming:supportedSource>

<lgNaming:supportedSource localId="_111101">11.11.0.1</lgNaming:supportedSource>

</lgVD:mappings>

<lgVD:valueDomainDefinition valueDomainURI="SRITEST:AUTO:DomesticAutoMakers" valueDomainName="Domestic Auto Makers" defaultCodingScheme="Automobiles" effectiveDate="2009-01-01T11:00:00Z" isActive="true" status="ACTIVE">

<lgVD:properties>

<lgCommon:property propertyName="textualPresentation">

<lgCommon:value> Domestic Auto Makers</lgCommon:value>

</lgCommon:property>

</lgVD:properties>

<lgVD:definitionEntry ruleOrder="1" operator="OR">

<lgVD:entityReference entityCode="005" referenceAssociation="hasSubtype" transitiveClosure="true" targetToSource="false" leafOnly="false"/>

</lgVD:definitionEntry>

</lgVD:valueDomainDefinition>

<lgVD:valueDomainDefinition valueDomainURI="SRITEST:AUTO:AllDomesticButGM" valueDomainName="All Domestic Autos But GM" defaultCodingScheme="Automobiles" effectiveDate="2009-01-01T11:00:00Z" isActive="true" status="ACTIVE">

<lgVD:properties>

<lgCommon:property propertyName="textualPresentation">

<lgCommon:value> Domestic Auto Makers</lgCommon:value>

</lgCommon:property>

</lgVD:properties>

<lgVD:definitionEntry ruleOrder="1" operator="OR">

<lgVD:entityReference entityCode="005" referenceAssociation="hasSubtype" transitiveClosure="true" targetToSource="false" leafOnly="false"/>

</lgVD:definitionEntry>

<lgVD:definitionEntry ruleOrder="2" operator="SUBTRACT">

<lgVD:entityReference entityCode="GM" referenceAssociation="hasSubtype" transitiveClosure="true" targetToSource="false" leafOnly="false"/>

</lgVD:definitionEntry>

</lgVD:valueDomainDefinition>

<lgVD:valueDomainDefinition valueDomainURI="SRITEST:AUTO:AllDomesticANDGM" valueDomainName="All Domestic Autos AND GM" defaultCodingScheme="Automobiles" effectiveDate="2009-01-01T11:00:00Z" isActive="true" status="ACTIVE">

<lgVD:properties>

<lgCommon:property propertyName="textualPresentation">

<lgCommon:value> Domestic Auto Makers AND GM</lgCommon:value>

</lgCommon:property>

</lgVD:properties>

<lgVD:definitionEntry ruleOrder="1" operator="OR">

<lgVD:entityReference entityCode="005" referenceAssociation="hasSubtype" transitiveClosure="true" targetToSource="false" leafOnly="false"/>

</lgVD:definitionEntry>

<lgVD:definitionEntry ruleOrder="2" operator="AND">

<lgVD:entityReference entityCode="GM" referenceAssociation="hasSubtype" transitiveClosure="true" targetToSource="false" leafOnly="false"/>

</lgVD:definitionEntry>

</lgVD:valueDomainDefinition>

<lgVD:valueDomainDefinition valueDomainURI="SRITEST:AUTO:DomasticLeafOnly" valueDomainName="Domestic Leaf Only" defaultCodingScheme="Automobiles" effectiveDate="2009-01-01T11:00:00Z" isActive="true" status="ACTIVE">

<lgVD:properties>

<lgCommon:property propertyName="textualPresentation">

<lgCommon:value>Domestic Leaf Only</lgCommon:value>

</lgCommon:property>

</lgVD:properties>

<lgVD:definitionEntry ruleOrder="1" operator="OR">

<lgVD:entityReference entityCode="005" referenceAssociation="hasSubtype" transitiveClosure="true" targetToSource="false" leafOnly="true"/>

</lgVD:definitionEntry>

</lgVD:valueDomainDefinition>

</lgVer:valueDomains>

</systemRelease>

|
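The definitionEntry elements in the sample combine rules with the operators OR, AND, and SUBTRACT, applied in ruleOrder. The sketch below shows one plausible reading of that combination, assuming OR is a set union, AND an intersection, and SUBTRACT a difference; that mapping is an assumption drawn from the operator names and the sample domain names, not from the LexEVS source. `RuleOperator`, `DefinitionRule`, and `RuleCombinerSketch` are hypothetical names, and each rule is given the already resolved set of entity codes rather than an entityReference.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.LinkedHashSet;
import java.util.List;
import java.util.Set;

// Hypothetical types for illustration; not LexEVS classes.
enum RuleOperator { OR, AND, SUBTRACT }

final class DefinitionRule {
    final int ruleOrder;
    final RuleOperator operator;
    final Set<String> codes; // codes this entry's reference resolved to

    DefinitionRule(int ruleOrder, RuleOperator operator, Set<String> codes) {
        this.ruleOrder = ruleOrder;
        this.operator = operator;
        this.codes = codes;
    }
}

final class RuleCombinerSketch {
    /** Applies the rules in ascending ruleOrder, starting from an empty set. */
    static Set<String> combine(List<DefinitionRule> rules) {
        List<DefinitionRule> ordered = new ArrayList<>(rules);
        ordered.sort(Comparator.comparingInt((DefinitionRule r) -> r.ruleOrder));

        Set<String> result = new LinkedHashSet<>();
        for (DefinitionRule rule : ordered) {
            switch (rule.operator) {
                case OR:       result.addAll(rule.codes);    break; // union
                case AND:      result.retainAll(rule.codes); break; // intersection
                case SUBTRACT: result.removeAll(rule.codes); break; // difference
            }
        }
        return result;
    }
}
```

Under these assumptions, a domain such as "All Domestic Autos But GM" would be the codes reached from 005 minus the codes reached from GM.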

Below is a sample XML file containing Pick List Definitions in LexGrid format that can be loaded using LexEVS Pick List Service.

<?xml version="1.0" encoding="UTF-8"?>

<systemRelease xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xsi:schemaLocation="http://LexGrid.org/schema/2009/01/LexGrid/versions http://LexGrid.org/schema/2009/01/LexGrid/versions.xsd"

xmlns="http://LexGrid.org/schema/2009/01/LexGrid/versions" xmlns:lgVer="http://LexGrid.org/schema/2009/01/LexGrid/versions"

xmlns:lgCommon="http://LexGrid.org/schema/2009/01/LexGrid/commonTypes" xmlns:data="data"

xmlns:lgVD="http://LexGrid.org/schema/2009/01/LexGrid/valueDomains" xmlns:lgNaming="http://LexGrid.org/schema/2009/01/LexGrid/naming"

releaseURI="http://testRelease/04" releaseDate="2008-11-07T14:55:51.615-06:00">

<lgCommon:entityDescription>Sample value domains</lgCommon:entityDescription>

<pickLists>

<lgVD:mappings>

<lgNaming:supportedCodingScheme localId="Automobiles" uri="urn:oid:11.11.0.1">Automobiles</lgNaming:supportedCodingScheme>

<lgNaming:supportedLanguage localId="en" uri="www.en.org/orsomething">en</lgNaming:supportedLanguage>

<lgNaming:supportedNamespace localId="Automobiles" uri="urn:oid:11.11.0.1" equivalentCodingScheme="Automobiles">Automobiles</lgNaming:supportedNamespace>

<lgNaming:supportedProperty localId="textualPresentation">textualPresentation</lgNaming:supportedProperty>

<lgNaming:supportedSource localId="lexgrid.org">lexgrid.org</lgNaming:supportedSource>

<lgNaming:supportedSource localId="_111101">11.11.0.1</lgNaming:supportedSource>

</lgVD:mappings>

<lgVD:pickListDefinition pickListId="SRITEST:AUTO:DomesticAutoMakers" representsValueDomain="SRITEST:AUTO:DomesticAutoMakers" isActive="true" defaultEntityCodeNamespace="Automobiles" defaultLanguage="en" completeDomain="false">

<lgCommon:owner>Owner for Domestic Auto Makers</lgCommon:owner>

<lgCommon:entityDescription>DomesticAutoMakers</lgCommon:entityDescription>

<lgVD:mappings>

<lgNaming:supportedCodingScheme localId="Automobiles" uri="urn:oid:11.11.0.1">Automobiles</lgNaming:supportedCodingScheme>

<lgNaming:supportedDataType localId="texthtml">text/html</lgNaming:supportedDataType>

<lgNaming:supportedDataType localId="textplain">text/plain</lgNaming:supportedDataType>

<lgNaming:supportedLanguage localId="en" uri="www.en.org/orsomething">en</lgNaming:supportedLanguage>

<lgNaming:supportedNamespace localId="Automobiles" uri="urn:oid:11.11.0.1" equivalentCodingScheme="Automobiles">Automobiles</lgNaming:supportedNamespace>

<lgNaming:supportedProperty localId="textualPresentation">textualPresentation</lgNaming:supportedProperty>

<lgNaming:supportedSource assemblyRule="rule1" uri="http://informatics.mayo.edu" localId="lexgrid.org">lexgrid.org</lgNaming:supportedSource>

<lgNaming:supportedSource localId="_111101">11.11.0.1</lgNaming:supportedSource>

</lgVD:mappings>

<lgVD:pickListEntryNode pickListEntryId="PLGMp1" isActive="true">

<lgCommon:owner>Owner for PLGMp1</lgCommon:owner>

<lgCommon:entryState containingRevision="R001" relativeOrder="1" changeType="NEW" prevRevision="R00A"/>

<lgVD:inclusionEntry entityCode="GM" entityCodeNamespace="Automobiles" propertyId="p1">

<lgVD:pickText>General Motors</lgVD:pickText>

</lgVD:inclusionEntry>

<lgVD:properties>

<lgCommon:property propertyName="textualPresentation" isActive="true" language="en" propertyId="p1" propertyType="presentation" status="active" effectiveDate="2001-12-17T09:30:47Z" expirationDate="2011-12-17T09:30:47Z">

<lgCommon:owner role="role" subRef="subref">General Motors</lgCommon:owner>

<lgCommon:entryState containingRevision="R001" relativeOrder="1" changeType="NEW" prevRevision="R00A"/>

<lgCommon:source subRef="subref1" role="role1">General Motors</lgCommon:source>

<lgCommon:value dataType="textplain">Property for General Motors</lgCommon:value>

</lgCommon:property>

</lgVD:properties>

</lgVD:pickListEntryNode>

<lgVD:pickListEntryNode pickListEntryId="PLGMp2" isActive="true">

<lgCommon:owner>Owner for PLGMp2</lgCommon:owner>

<lgCommon:entryState containingRevision="R001" relativeOrder="1" changeType="NEW" prevRevision="R00A"/>

<lgVD:inclusionEntry entityCode="GM" entityCodeNamespace="Automobiles" propertyId="p2">

<lgVD:pickText>GM</lgVD:pickText>

</lgVD:inclusionEntry>

</lgVD:pickListEntryNode>

<lgVD:pickListEntryNode pickListEntryId="PLJaguarp1" isActive="true">

<lgCommon:owner>Owner for PLJaguarp1</lgCommon:owner>

<lgCommon:entryState containingRevision="R001" relativeOrder="1" changeType="NEW" prevRevision="R00A"/>

<lgVD:inclusionEntry entityCode="Jaguar" entityCodeNamespace="Automobiles" propertyId="p1">

<lgVD:pickText>Jaguar</lgVD:pickText>

</lgVD:inclusionEntry>

</lgVD:pickListEntryNode>

<lgVD:pickListEntryNode pickListEntryId="PLChevroletp1" isActive="true">

<lgCommon:owner>Owner for PLChevroletp1</lgCommon:owner>

<lgCommon:entryState containingRevision="R001" relativeOrder="1" changeType="NEW" prevRevision="R00A"/>

<lgVD:inclusionEntry entityCode="Chevy" entityCodeNamespace="Automobiles" propertyId="p1">

<lgVD:pickText>Chevrolet</lgVD:pickText>

</lgVD:inclusionEntry>

</lgVD:pickListEntryNode>

</lgVD:pickListDefinition>

<lgVD:pickListDefinition pickListId="SRITEST:AUTO:DomasticLeafOnly" representsValueDomain="SRITEST:AUTO:DomasticLeafOnly" completeDomain="true" defaultEntityCodeNamespace="Automobiles" defaultLanguage="en" isActive="true">

<lgCommon:entityDescription>Leaf Only Nodes of Domastic AutoMakers</lgCommon:entityDescription>

</lgVD:pickListDefinition>

</pickLists>

</systemRelease>

|
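As a reading aid for the sample file, the sketch below pulls the pickText values out of a LexGrid pick list document using only the JDK's DOM parser. It is not the LexEVS loader: `PickTextReaderSketch` is a hypothetical class, and it relies only on the lgVD namespace URI and the pickText element name that appear in the sample above.

```java
import java.io.ByteArrayInputStream;
import java.nio.charset.StandardCharsets;
import java.util.ArrayList;
import java.util.List;
import javax.xml.parsers.DocumentBuilderFactory;
import org.w3c.dom.Document;
import org.w3c.dom.NodeList;

// Hypothetical helper for illustration; not part of LexEVS.
final class PickTextReaderSketch {
    static final String VD_NS =
            "http://LexGrid.org/schema/2009/01/LexGrid/valueDomains";

    /** Returns the text of every lgVD:pickText element, in document order. */
    static List<String> pickTexts(String xml) {
        try {
            DocumentBuilderFactory factory = DocumentBuilderFactory.newInstance();
            factory.setNamespaceAware(true); // required for namespace lookups
            Document doc = factory.newDocumentBuilder().parse(
                    new ByteArrayInputStream(xml.getBytes(StandardCharsets.UTF_8)));

            NodeList nodes = doc.getElementsByTagNameNS(VD_NS, "pickText");
            List<String> texts = new ArrayList<>();
            for (int i = 0; i < nodes.getLength(); i++) {
                texts.add(nodes.item(i).getTextContent().trim());
            }
            return texts;
        } catch (Exception e) {
            // Wrap parser and I/O failures so callers need no checked handling.
            throw new IllegalStateException("Could not parse pick list XML", e);
        }
    }
}
```

Applied to the first pickListDefinition in the sample, this would be expected to yield General Motors, GM, Jaguar, and Chevrolet.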

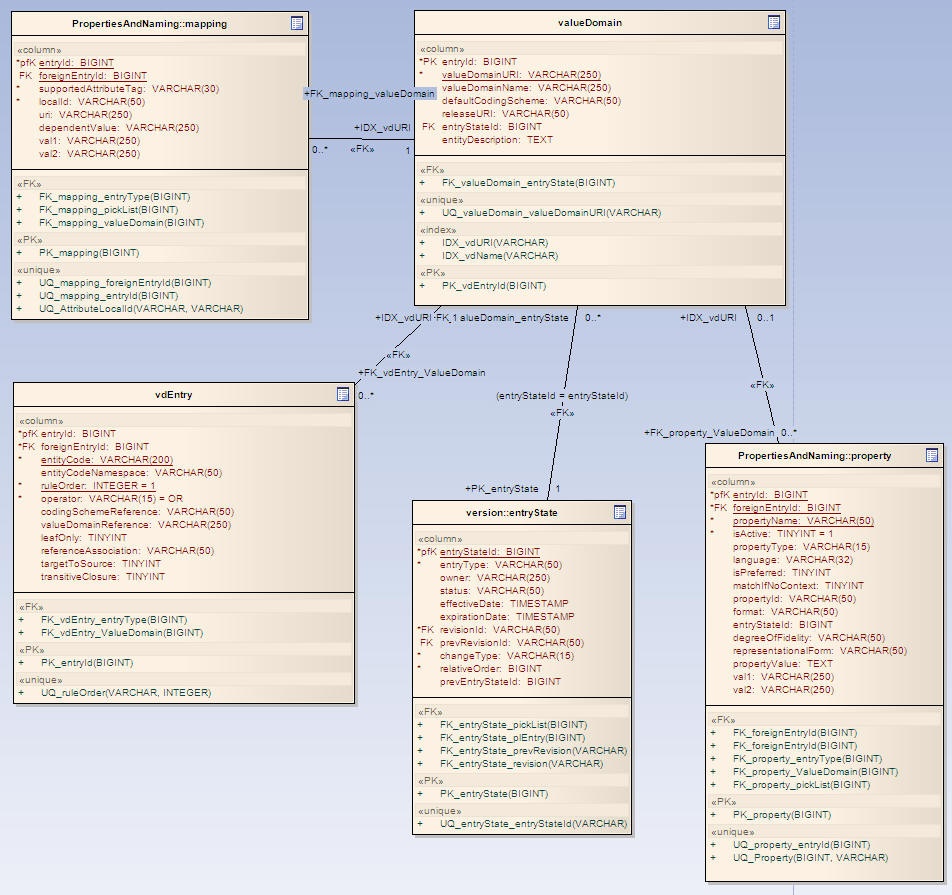

| Table Name | Description |
|---|---|
| valueDomain | Contains Value Domain Definition information |
| vdEntry | Contains Value Domain Entry information and its rules |
| entryState | Contains entry state details of every entry |
| mappings | Contains supported mapping information for a Value Domain Definition |
| property | Contains Property information for a Value Domain Definition |

| Table Name | Description |
|---|---|
| pickList | Contains Pick List Definition information |
| plEntry | Contains Pick List Entry Node information |
| entryState | Contains entry state details of every entry |
| mappings | Contains supported mapping information for a Pick List Definition |
| property | Contains Property information for a Pick List Definition and its Nodes |

Both the LexEVS Value Domain and Pick List services are an integrated part of the core LexEVS API and will be packaged and installed with the other LexEVS services.

The System test case for the LexEVS Value Domain service is performed using the JUnit test suite:

org.LexGrid.LexBIG.Impl.testUtility.VDAllTests

This test suite will be run as part of regular LexEVS test suites AllTestsAllConfigs and AllTestsNormalConfigs.

The System test case for the LexEVS Pick List service is performed using the JUnit test suite:

org.LexGrid.LexBIG.Impl.testUtility.PickListAllTests

This test suite will be run as part of regular LexEVS test suites AllTestsAllConfigs and AllTestsNormalConfigs.

This document provides the detailed design and implementation of the LexBIG Enterprise Vocabulary Service (LexEVS) Loader Framework Extension. It also aims to provide enough information for those who wish to create their own loaders to do so. The document assumes the reader is already familiar with the LexEVS software.

The LexEVS software already provides a set of loaders within an existing legacy framework, which has served LexEVS developers well over many years. But as LexEVS has gained users and requests for new loaders have grown, it was decided that a new loader framework should be developed that would: (1) be easier to extend, (2) provide improved performance, (3) support dynamic loading of new loaders, and (4) take advantage of proven open source components such as Spring Batch and Hibernate.

Specifically, this development work addresses "TASK 6 - IMPROVE LEXEVS LOADING FRAMEWORK" in the National Cancer Institute (NCI) Statement of Work (SOW) document (reference ?????).

Also, this Framework is completely independent of the current loader code so there is no impact to current loaders.

The LexEVS Loader Framework will provide a way for LexEVS developers to write new loaders and have them recognized dynamically by the LexEVS code. Also the framework will provide help to loader developers in the form of utility classes and interfaces.

The LexEVS Loader Framework extends the functionality of LexBIG 5.0. For more information on LexBIG, refer to LexEVS 5.0.

High Level Overview

The following figure shows the major components of the Loader Framework (A) in relation to a hypothetical new loader, along with the expected API usage. Ideally, the new loader can make most of its API calls through the utilities provided by the Loader Framework API (B). Some work will need to be done with Spring (C), such as setting up a Spring config file. It may or may not be necessary for a loader to use Hibernate (D) or the LexBIG API (E). Again, the hope is that much of the work a new loader needs to do can be accomplished through the Loader Framework API.

The Loader Framework utilizes Spring Batch for managing its Java objects to improve performance and Hibernate provides the mapping to the LexGrid database.

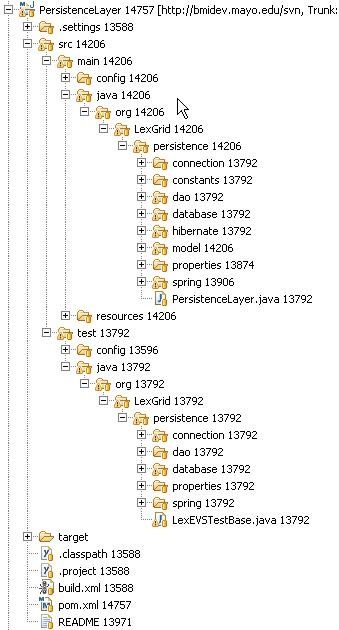

The Loader Framework code is available in the NCI Subversion (SVN) repository. It is comprised of three Framework projects. Also at the time of this writing there are three projects in the repository that utilize the Loader Framework. These projects utilize Maven for build and dependency management.

Loader Framework Projects

Loader Projects Using the New Framework

Maven

The above projects are built and managed by Maven.

Maven plugin for Eclipse: http://m2eclipse.codehaus.org/

So you want to write a loader and use the Loader Framework. What are the key considerations?

In general the process can be described as:

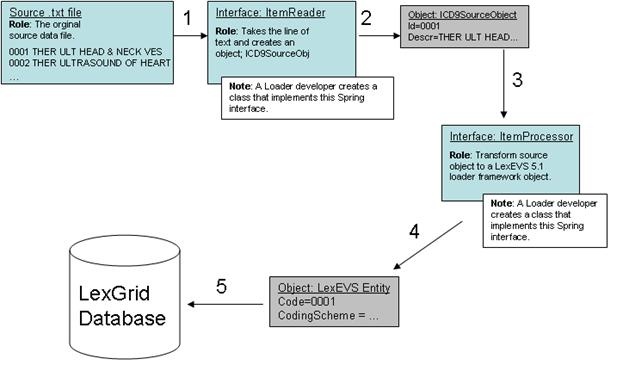

An example may help in understanding the Framework. Our discussion will refer to the following figure. Let's say we are writing a loader to load the ICD-9-CM codes and their descriptions, which are contained in a text file. We know we'll need a data structure to hold the data after we've read it, so we have a class:

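    // Holds one ICD-9-CM record read from the text file.
    class ICD9SourceObject {
        String id;     // ICD-9-CM code
        String descr;  // description of the code

        String getId() { return id; }
    }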

Enter Spring. The Loader Framework uses Spring Batch to manage the reading, processing, and writing of data. Spring provides classes and interfaces to help do this work, and the Loader Framework also provides utilities to help loader developers. In our example, we will write a class that implements the Spring ItemReader interface. It will take a line of text and return an ICD9SourceObject (1 and 2). Next we'll want to process that data into a LexEVS object such as an Entity object, so we'll write a class that implements Spring's ItemProcessor interface. It will take our ICD9SourceObject and output a LexEVS Entity object (3 and 4). Finally, we'll want to write the data to the database (5). Note that the LexEVS model objects provided in the Loader Framework are generated by Hibernate and use Hibernate to write the data to the database. This frees us from having to write SQL.
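The read and process steps of this walk-through can be sketched in plain Java. This is only an illustration under stated assumptions: the two interfaces are local stand-ins that mirror the shape of Spring Batch's ItemReader and ItemProcessor so the sketch compiles without Spring, SketchEntity is a hypothetical placeholder for the LexEVS Entity class, and the `code|description` line format is assumed rather than taken from the actual ICD-9-CM file.

```java
import java.util.Iterator;
import java.util.List;

// Local stand-ins mirroring Spring Batch's ItemReader and ItemProcessor,
// declared here only so the sketch compiles without Spring on the classpath.
interface ItemReader<T> { T read() throws Exception; }
interface ItemProcessor<I, O> { O process(I item) throws Exception; }

// Source-side data structure from the example.
class ICD9SourceObject {
    final String id;
    final String descr;
    ICD9SourceObject(String id, String descr) { this.id = id; this.descr = descr; }
}

// Hypothetical, simplified placeholder for a LexEVS Entity (not the real class).
class SketchEntity {
    String entityCode;
    String entityDescription;
}

// Steps 1 and 2: turn one line of text into an ICD9SourceObject.
class Icd9LineReader implements ItemReader<ICD9SourceObject> {
    private final Iterator<String> lines;

    Icd9LineReader(List<String> lines) { this.lines = lines.iterator(); }

    @Override
    public ICD9SourceObject read() {
        if (!lines.hasNext()) {
            return null; // a null return signals "no more input" to Spring Batch
        }
        // Assumed line format: "<code>|<description>"
        String[] parts = lines.next().split("\\|", 2);
        String descr = parts.length > 1 ? parts[1].trim() : "";
        return new ICD9SourceObject(parts[0].trim(), descr);
    }
}

// Steps 3 and 4: convert the source object into an entity-like object.
class Icd9EntityProcessor implements ItemProcessor<ICD9SourceObject, SketchEntity> {
    @Override
    public SketchEntity process(ICD9SourceObject item) {
        SketchEntity entity = new SketchEntity();
        entity.entityCode = item.id;
        entity.entityDescription = item.descr;
        return entity;
    }
}
```

In a real loader the two classes would implement the Spring interfaces directly and be registered as beans in the Spring config file; step 5, the write, would be left to the Hibernate-backed writers supplied by the Framework.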

Spring

You will need to configure Spring to be aware of your objects and how to manage them. This is done via an XML configuration file. More details on the Spring config file appear below.

ItemReader/ItemProcessor

You will either write a class implementing one of these interfaces or use one of the Spring helper classes that already implements it. If you use one of the Spring classes, you may need to provide a helper class of your own to construct your internal data structure object, such as ICD9SourceObject. You would provide it to the Spring object via a setProperty call configured in the Spring config file.
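As one possible illustration of the helper-class approach: Spring Batch's FlatFileItemReader reads a file line by line and delegates the conversion of each line to a LineMapper that is set as a property. The sketch below shows what such a helper might look like. The LineMapper interface here is a local stand-in with the same method shape as Spring's, Icd9LineMapper is a hypothetical name, and the `code|description` line format is an assumption.

```java
// Local stand-in mirroring Spring Batch's LineMapper<T>, declared here only
// so the sketch compiles without Spring on the classpath.
interface LineMapper<T> { T mapLine(String line, int lineNumber) throws Exception; }

// Source-side data structure from the example.
class ICD9SourceObject {
    final String id;
    final String descr;
    ICD9SourceObject(String id, String descr) { this.id = id; this.descr = descr; }
}

// Hypothetical helper that builds an ICD9SourceObject from one line of text.
class Icd9LineMapper implements LineMapper<ICD9SourceObject> {
    @Override
    public ICD9SourceObject mapLine(String line, int lineNumber) {
        // Assumed line format: "<code>|<description>"
        int separator = line.indexOf('|');
        if (separator < 0) {
            throw new IllegalArgumentException(
                    "Line " + lineNumber + " has no '|' separator: " + line);
        }
        String id = line.substring(0, separator).trim();
        String descr = line.substring(separator + 1).trim();
        return new ICD9SourceObject(id, descr);
    }
}
```

With this approach the reader itself needs no custom code; only the mapping from a line to the internal data structure is loader-specific.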

Maven Set up

The projects containing the Loader Framework (PersistanceLayer, loader-framework, and loader-framework-core) use Maven for dependency management and build. You will still use Eclipse as your IDE and code repository, but you will need to install a Maven plugin for Eclipse, which can be found at: http://m2eclipse.sonatype.org/

After the plugin is installed you'll need to provide a URL and userid/password to a Maven repository on a server (which manages your dependencies or dependent jar files). Ours here at Mayo is:

http://bmidev4:8282/nexus-webapp-1.3.3/index.html

Once Maven is configured, you can import the Loader Framework classes from SVN. Upon doing that you will most likely see build errors about missing jars. Resolve those by right-clicking on the project with errors, then selecting 'Maven' and 'Resolve Dependencies'. This will pull the dependent jars from the Maven repository into your local environment.

To build a Maven project, right click on the project, select 'Maven', then select 'assembly:assembly'.

Eclipse Project Set up

When loader developers create a new loader project in Eclipse it is recommended they follow the Maven directory structure. By following this convention Maven can build the project and find the test cases.

From the Maven documentation:

For more information on the Maven project refer to the documentation on apache.org.

Configure your Spring Config (myLoader.xml)

Spring is a lightweight bean management container that, among other things, provides the batch function used by the Loader Framework. A loader using the framework needs to work closely with Spring Batch, and it does so through Spring's configuration file. There you configure beans (your loader code) and how Spring Batch should use that code, by configuring a Job, its Steps, and other Spring Batch elements. What follows is a brief overview of the tags relevant to the Loader Framework. For more detail, refer to the Spring documentation.

Beans

The 'beans:beans' tag is the all-encompassing tag; you define all your other tags inside it. You can also define an import within this tag to import an external Spring config file (not shown in Figure 3).

Bean

Use the 'beans:bean' tags to define the beans to be managed by the Spring container by specifying the package-qualified class name. You can also specify initialization values and set bean properties within these tags.

    <beans:bean id="umlsCuiPropertyProcessor"
                parent="umlsDefaultPropertyProcessor"
                class="org.lexgrid.loader.processor.EntityPropertyProcessor">
        <beans:property name="propertyResolver" ref="umlsCuiPropertyResolver" />
    </beans:bean>

Job

The 'job' tag is the main unit of work. The job is made up of one or more steps that define the work to be done. More advanced things can also be done within the Job, such as using 'split' and 'flow' tags to indicate work that can be done in parallel steps to improve performance.

    <job id="umlsJob" restartable="true">
        <step id="populateStagingTable" next="loadHardcodedValues" parent="stagingTablePopulatorStepFactory"/>
        ...

Step

One or more step tags make up a job, and they can vary from simple to complex in content. Among other things, you can specify which step should be executed next.

Tasklet

You can do anything you want within a Tasklet, such as sending an email or calling a LexBIG function such as indexing. You're not limited to just database operations. The Spring documentation also has this to say about Tasklets:

The Tasklet is a simple interface that has one method, execute, which will be called repeatedly by the TaskletStep until it either returns RepeatStatus.FINISHED or throws an exception to signal a failure. Each call to the Tasklet is wrapped in a transaction.
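The repeat-until-finished contract described in that quote can be sketched as follows. This is a simplified illustration, not Spring's implementation: RepeatStatus and Tasklet are local stand-ins, the stand-in execute method omits the StepContribution and ChunkContext arguments that Spring's Tasklet.execute takes, transactions are left out, and IndexingTasklet with its simulated indexing is hypothetical.

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.Deque;
import java.util.List;

// Local stand-ins; Spring's Tasklet.execute also receives a StepContribution
// and a ChunkContext, which this simplified sketch omits.
enum RepeatStatus { CONTINUABLE, FINISHED }
interface Tasklet { RepeatStatus execute() throws Exception; }

// Hypothetical tasklet that handles one pending coding scheme per call.
// The "indexing" is simulated by recording the scheme name.
class IndexingTasklet implements Tasklet {
    private final Deque<String> pending;
    final List<String> indexed = new ArrayList<>();

    IndexingTasklet(List<String> schemes) { this.pending = new ArrayDeque<>(schemes); }

    @Override
    public RepeatStatus execute() {
        String scheme = pending.poll();
        if (scheme != null) {
            indexed.add(scheme);
        }
        return pending.isEmpty() ? RepeatStatus.FINISHED : RepeatStatus.CONTINUABLE;
    }
}

class TaskletRunner {
    // Calls the tasklet repeatedly until it reports FINISHED and returns the
    // number of calls made. An exception from execute() propagates to the
    // caller, which is how a failure would be signalled.
    static int runToCompletion(Tasklet tasklet) throws Exception {
        int calls = 0;
        RepeatStatus status;
        do {
            status = tasklet.execute();
            calls++;
        } while (status != RepeatStatus.FINISHED);
        return calls;
    }
}
```

A tasklet that does all of its work in one call simply returns FINISHED the first time it is executed.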

Chunk

Spring documentation says it best:

Spring Batch uses a 'Chunk Oriented' processing style within its most common implementation. Chunk oriented processing refers to reading the data one at a time, and creating 'chunks' that will be written out, within a transaction boundary. One item is read in from an ItemReader, handed to an ItemWriter, and aggregated. Once the number of items read equals the commit interval, the entire chunk is written out via the ItemWriter, and then the transaction is committed.
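A minimal sketch of the chunk-oriented loop may make the commit interval concrete. It is an illustration only, not Spring's implementation: the three interfaces are local stand-ins (the writer mirrors the list-based `write` method of the Spring Batch versions of that era), ChunkLoop is a hypothetical name, and the transaction that Spring would commit after each write is reduced to a comment.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Iterator;
import java.util.List;

// Local stand-ins mirroring the Spring Batch interfaces, declared here only
// so the sketch compiles without Spring on the classpath.
interface ItemReader<T> { T read() throws Exception; }
interface ItemProcessor<I, O> { O process(I item) throws Exception; }
interface ItemWriter<T> { void write(List<? extends T> items) throws Exception; }

class ChunkLoop {
    // Reads and processes items one at a time, collects them into a chunk,
    // and writes the chunk once commitInterval items have been gathered or
    // the input is exhausted. Returns the number of chunks written.
    static <I, O> int run(ItemReader<I> reader, ItemProcessor<I, O> processor,
                          ItemWriter<O> writer, int commitInterval) throws Exception {
        if (commitInterval < 1) {
            throw new IllegalArgumentException("commitInterval must be at least 1");
        }
        int chunksWritten = 0;
        boolean exhausted = false;
        while (!exhausted) {
            List<O> chunk = new ArrayList<>();
            while (chunk.size() < commitInterval) {
                I item = reader.read();
                if (item == null) { // null means the reader has no more input
                    exhausted = true;
                    break;
                }
                chunk.add(processor.process(item));
            }
            if (!chunk.isEmpty()) {
                writer.write(chunk); // in Spring Batch the transaction commits after this write
                chunksWritten++;
            }
        }
        return chunksWritten;
    }

    public static void main(String[] args) throws Exception {
        Iterator<String> source = Arrays.asList("a", "b", "c", "d", "e").iterator();
        int chunks = ChunkLoop.<String, String>run(
                () -> source.hasNext() ? source.next() : null,
                s -> s.toUpperCase(),
                items -> System.out.println("writing chunk " + items),
                2);
        System.out.println(chunks + " chunks written");
    }
}
```

With five input items and a commit interval of 2, the writer is called three times, receiving chunks of two, two, and one item. A larger commit interval means fewer, larger writes per transaction.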

Reader

An attribute of the chunk tag. It refers to the class you wrote that implements the Spring ItemReader interface to read data from your data file and create domain-specific objects.

Processor

Another attribute of the chunk tag. This is the class that implements the ItemProcessor interface, where other manipulations of the domain objects take place. In the case of the Loader Framework, we create LexGrid model objects from the domain objects so that they can be written to the database via Hibernate. Note that this is not a required attribute. In theory, if your data source let you create LexBIG objects immediately on reading, you would not need a processor. In practice this is most likely not the case, and you will need to work with the data to get it into LexBIG objects.

Writer

Another attribute of the chunk tag. This class implements the Spring ItemWriter interface. In the case of the Loader Framework, these classes have been written for you. They are the LexGrid model objects that use Hibernate to write to the database.

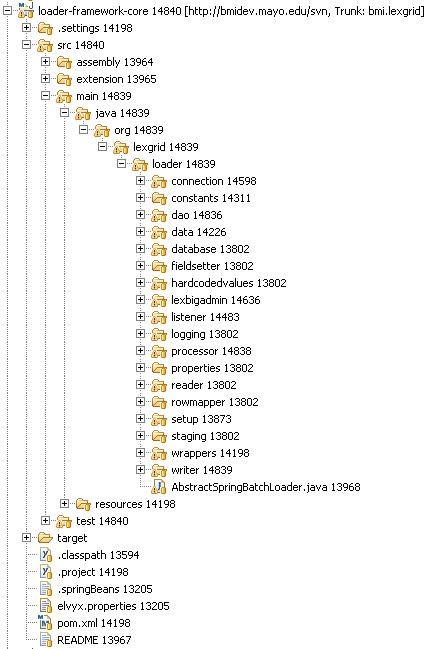

Below is an image of the loader-framework-core project in Eclipse which shows the key directories of the Loader Framework. The following is a summary of the contents of those directories.

| Directory | Summary |
|---|---|
| connection | Connect to LexBIG and do LexBIG tasks such as register and activate. |
| constants | Assorted constants. |
| dao | Access to the LexBIG database. |
| data | Directly related to data going into the LexBIG database tables. |
| database | Database-specific tasks not related to data, such as finding out the database type (MySQL, Oracle). |
| fieldsetter | Spring-related classes for helping to write to the database. |
| lexbigadmin | Common tasks you want LexBIG to do for you, such as indexing. |
| listener | You can attach listeners to a load so that code executes at certain points in the load, such as a cleanup listener that runs when the load is finished, or a setup listener. |
| logging | Gives you access to the LexBIG logger. |
| processor | Important directory. Contains classes that you can pass your domain-specific object to and that will return a LexBIG object. |
| properties | Code used internally by the Loader Framework. |
| reader | Readers and reader-related tools for loader developers. |
| rowmapper | Classes for reading from a database. Currently experimental code. |
| setup | Classes such as JobRepositoryManager that help Spring do its work. As Spring runs, it keeps tables of its internal workings. Loader developers should not need to dive into this directory. |
| staging | If your loader needs to load data to the database temporarily, you can find helper classes in this directory. |
| wrappers | Helper classes and data structures such as a Code/CodingScheme class. |
| writer | Miscellaneous classes that write to the database. These are not the same ones you'd use in your loader, i.e., the LexBIG model objects that use Hibernate. Those classes are contained in the PersistenceLayer project (next figure). It is by using those classes in the PersistenceLayer that you let the Loader Framework do some of the heavy lifting for you. |


Spring Batch gives the Loader Framework some degree of recovery from errors. Like the other features of Spring, it is something the loader developer needs to configure in the Spring config file. Basically, Spring keeps track of the steps it has executed and notes any step that has failed. Those failed steps can be re-run at a later time. The Spring documentation provides additional information on this function; refer to the configure job documentation and configure step documentation.
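The idea of tracking step outcomes and re-running only what failed can be sketched in plain Java. This is a conceptual illustration under stated assumptions, not how Spring Batch is implemented: StepHistory is a hypothetical in-memory stand-in for the execution history Spring keeps in its job repository tables, and RestartableJob is a hypothetical name. The step names in the usage note are borrowed from the umlsJob fragment shown earlier.

```java
import java.util.LinkedHashMap;
import java.util.Map;

enum StepStatus { COMPLETED, FAILED }

// Hypothetical in-memory stand-in for the step execution history that
// Spring Batch persists in its job repository tables.
class StepHistory {
    private final Map<String, StepStatus> statusByStep = new LinkedHashMap<>();

    void record(String step, StepStatus status) { statusByStep.put(step, status); }
    StepStatus statusOf(String step) { return statusByStep.get(step); }
    boolean isCompleted(String step) { return statusByStep.get(step) == StepStatus.COMPLETED; }
}

// Hypothetical job that runs its steps in order and can be run again later.
class RestartableJob {
    private final Map<String, Runnable> steps = new LinkedHashMap<>();
    private final StepHistory history;

    RestartableJob(StepHistory history) { this.history = history; }

    RestartableJob step(String name, Runnable work) {
        steps.put(name, work);
        return this;
    }

    // Runs the steps in declaration order, skipping any step already recorded
    // as COMPLETED. Stops at the first failing step and records it as FAILED.
    // Returns true when every step has completed.
    boolean run() {
        for (Map.Entry<String, Runnable> entry : steps.entrySet()) {
            String name = entry.getKey();
            if (history.isCompleted(name)) {
                continue; // finished in an earlier run, so not repeated
            }
            try {
                entry.getValue().run();
                history.record(name, StepStatus.COMPLETED);
            } catch (RuntimeException ex) {
                history.record(name, StepStatus.FAILED);
                return false;
            }
        }
        return true;
    }
}
```

For example, if a job has the steps populateStagingTable and loadHardcodedValues and the second one fails, calling run() again with the same history repeats only loadHardcodedValues.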
